The Agentic AI narrative is currently just expensive lipstick on a fragmented pig.

Why the Current Narrative Will Disappoint (And What 2026–2030 Actually Looks Like)

The market is pricing in a simple story:

Agentic AI will autonomously orchestrate workflows across enterprise systems, driving 20–30% productivity gains, compressing timelines, and unlocking $2–4 trillion in enterprise value.

Palantir (PLTR) trades at 160x free cash flow (FCF).

Snowflake (SNOW) at 62x FCF.

Databricks recently raised a Series K round at a $134B valuation, approx. 30x FCF.

ServiceNow (NOW), Salesforce (CRM), Workday (WDAY) and a dozen “AI orchestration” platforms are burning billions on R&D to own the agent layer.

The bet is binary: AI agents will work seamlessly across siloed systems, and whoever controls the orchestration layer owns enterprise AI.

This narrative is about to hit a wall.

And the wall has a name: the data fortress problem.

The Dotcom Parallel (Or: How I Know This Story Ends)

In 1999, everyone said:

“The internet changes everything. Offline retail is dead. Borders, Blockbuster, and mall-based commerce are done. The winners will be pure-play e-commerce platforms.”

The narrative was half-right. The internet did change everything.

But the winners weren’t pure-play platforms. They were companies that figured out how to bridge online and offline: Amazon (AMZN), eventually, with logistics; Apple (AAPL) with stores; Walmart (WMT) with omnichannel.

The pure-plays (Pets.com, eToys, Webvan) failed because they ignored physical reality: you still need inventory, logistics, and real-world operations.

The story of agentic AI today mirrors this.

Current narrative:

“Agentic AI will orchestrate enterprise workflows via APIs and integrations. Palantir, ServiceNow, and cloud platforms will be the ‘Agentic AI winners.’”

The reality: Enterprise workflows aren’t separable from the systems and data models they live in.

Just like e-commerce couldn’t ignore inventory and logistics, agentic AI can’t ignore the fortresses built into ERP systems over 30+ years.

The Cloud Migration Parallel (Or: How Hybrid Always Wins)

In 2010, the narrative was:

“Cloud is cheaper, faster, and more scalable. On-premise is dead. Everyone will migrate everything to the cloud.”

A decade later, virtually no F500 company went “all cloud.” Instead, the story became “hybrid cloud.”

Why?

Because:

Some data and workloads are legally or operationally inseparable from on-premise infrastructure

Rip-and-replace migrations are risky and expensive

Organisations optimise for optionality, not purity

The winners in cloud weren’t companies that said “on-premise is dead.”

The winners were companies that said “let’s help you use both, optimally.”

AWS didn’t win by making on-premise irrelevant. It won by making it coexist with cloud.

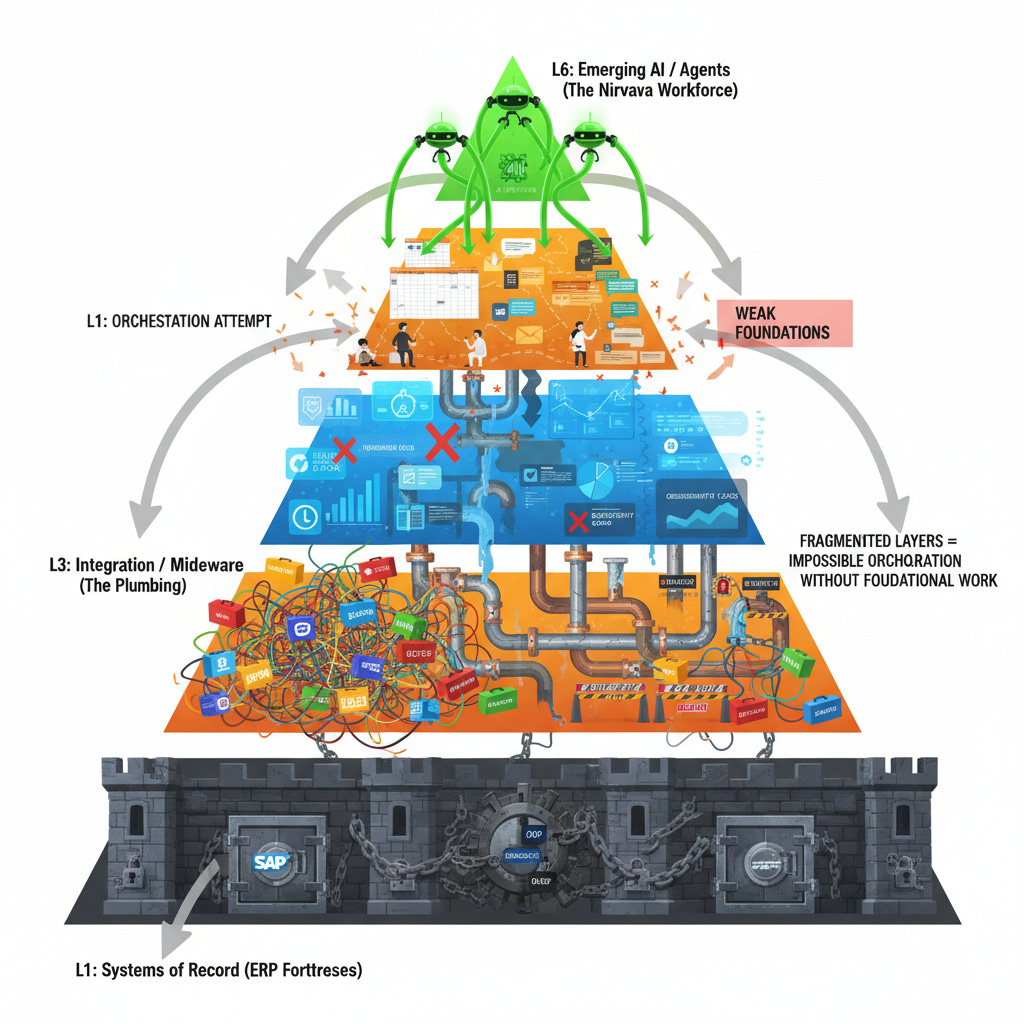

The Real Story: ERP Fortresses and the Myth of the Unified Agent

Here’s what the current investment thesis misses:

ERP Systems Are Not “Legacy Problems to Solve”—They Are Permanent

SAP, Oracle, Salesforce, and Workday are not going anywhere. They house:

Core customer, product, and transactional data (decades of inputs)

Mission-critical business logic (GL, AR/AP, demand planning, supply chain optimisation)

Compliance and audit requirements (GL posting, trial balance reconciliation, SOX controls)

Contractual and operational constraints (payment terms, margin rules, order logic)

They were built to be systems of record for good reason: consistency, auditability, and control matter.

But ERPs Also Are Data Fortresses

Here’s the problem:

SAP’s data model (the way it organises customers, products, orders, and transactions) is different from Oracle’s. And both are different from Salesforce’s.

More importantly:

SAP doesn’t want you to extract data fluidly

Their model assumes you run everything inside SAP, with SAP analytics on top. APIs exist, but they’re designed for “integration” (getting data to SAP), not “extraction” (getting data out cleanly).

Oracle has the same fortress mentality

They want you to use Oracle Cloud Infrastructure (OCI), Oracle Analytics, Oracle AI. Cross-platform interop is possible but not prioritised.

Salesforce actively discourages data portability

Salesforce Data Cloud and Einstein Copilot are designed to lock customers into the Salesforce ecosystem. Exporting clean data for use in a third-party orchestration system (like Palantir) is technically possible, but commercially discouraged.

The result: To orchestrate agentic workflows across a typical F500 company, you have to extract data out of fortresses, unify it (semantically and technically), and then give agents access to it.

That is precisely what the current investment narrative skips over.

The Use Case That Breaks the Current Narrative

Let me ground this in reality.

The Scenario: A Fortune 500 Company Wants to Automate Quote-to-Cash

The Business Goal:

Reduce the quote-to-cash cycle from 45 days to 7 days. Deploy agentic AI to:

Take inbound customer requests (Layer 1)

Generate quotes automatically (Layer 2)

Get internal approvals (Layer 3)

Update CRM (Layer 2)

Manage contracts (Layer 2)

Create AR entries (Layer 1)

Track KPIs (Layer 4)

Alert humans when exceptions occur (Layers 4–5)

Sounds straightforward. The AI vendors will say “we can do this in 90 days.”

Now let’s map what’s actually required across the six layers.

Layer 1: Systems of Record (ERP Fortresses)

The Company’s ERP Stack:

SAP for Finance & Controlling: All GL, AR/AP, cost allocation, profitability ledgers

Oracle NetSuite for Billing & Revenue Recognition: Customer invoices, revenue GL posting, ASC-606 compliance

Salesforce for CRM: Customer master, opportunities, quotes

The Agent’s Needs:

To approve a quote, the agent needs to:

Validate customer creditworthiness (SAP Finance + Oracle AR)

Check customer profitability (SAP GL via custom views)

Confirm available capacity (Oracle order management tables)

Price appropriately based on customer tier and volume (SAP pricing master + Salesforce customer data)

Post AR entry once customer accepts (Oracle Billing)

The Problem:

Data model mismatch:

SAP’s “customer” is defined by a customer master (business partner) ID

Oracle’s “customer” is a billing entity

Salesforce’s “account” is a relationship record

They don’t align. No shared key exists without custom mapping.
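A minimal sketch of what “custom mapping” means in practice is a hand-maintained crosswalk. It assigns each real customer a golden ID and lists the ID each fortress uses for it. Every ID below is invented for illustration. `SourceRef`, `resolve` and `same_customer` are hypothetical helpers. Real master data management tools do this with matching and survivorship rules, not a dict.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class SourceRef:
    system: str    # "sap", "netsuite" or "salesforce"
    local_id: str  # the ID as that system knows it

# Hypothetical crosswalk: one golden customer ID -> the IDs each system uses.
CROSSWALK = {
    "CUST-0001": {
        SourceRef("sap", "BP-100234"),          # SAP business partner
        SourceRef("netsuite", "48213"),         # NetSuite billing entity
        SourceRef("salesforce", "001A000001"),  # Salesforce account
    },
}

# Reverse index so an agent can resolve any source ID to the golden ID.
REVERSE = {ref: golden for golden, refs in CROSSWALK.items() for ref in refs}

def resolve(system: str, local_id: str) -> Optional[str]:
    """Return the golden customer ID, or None if nobody has mapped this record."""
    return REVERSE.get(SourceRef(system, local_id))

def same_customer(a: SourceRef, b: SourceRef) -> bool:
    """Two records are the same customer only if both resolve to one golden ID."""
    ga = resolve(a.system, a.local_id)
    gb = resolve(b.system, b.local_id)
    return ga is not None and ga == gb
```

An unmapped record (`resolve` returning `None`) is exactly the case where an agent should stop and ask, rather than guess.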

No clean APIs for ERP extraction:

SAP has OData APIs, but they’re read-heavy and rate-limited (100 calls/minute for most customers)

Oracle’s REST APIs are newer but don’t cover all data types (profitability ledger requires SQL queries)

Both require VPN access, identity management (SAML), and custom query logic

Business logic is buried:

Pricing rules: stored in SAP Pricing Master (customisable, unique to each company)

Approval thresholds: stored in Salesforce approval rules (declarative but not queryable externally)

Revenue recognition logic: embedded in Oracle Billing rules engine

The agent can’t access these rules directly: it has to call APIs that return outcomes (approved/denied), not reasoning.

Real-time constraints are strict:

Agents need to check creditworthiness in <500ms (customer expects quote in 2 minutes)

SAP batch credit check runs nightly (real-time checks are expensive add-ons)

Oracle AR is eventually consistent (data syncs from sub-ledgers overnight)

The agent can’t wait 24 hours; it has to make decisions on stale data
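Stale data can be made explicit rather than silent. One way is to attach an as-of timestamp to every credit reading and refuse to auto-approve once the reading is older than a freshness budget. This is a minimal sketch. The 24-hour budget and the `CreditSnapshot` shape are assumptions for illustration, not anything SAP or Oracle expose under those names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

@dataclass
class CreditSnapshot:
    customer_id: str
    credit_ok: bool
    as_of: datetime  # when the source system last computed this value

# Hypothetical policy: batch data older than this is too stale to act on alone.
MAX_AGE = timedelta(hours=24)

def credit_decision(snap: CreditSnapshot, now: datetime) -> str:
    """Return 'approve', 'decline' or 'escalate' based on the value and its age."""
    if now - snap.as_of > MAX_AGE:
        return "escalate"  # too stale: hand to a human or trigger a real-time check
    return "approve" if snap.credit_ok else "decline"

now = datetime.now(timezone.utc)
print(credit_decision(CreditSnapshot("CUST-0001", True, now - timedelta(hours=30)), now))
```

The design choice is that staleness overrides the value itself: a “good” credit reading from 30 hours ago still escalates.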

Time to fix Layer 1 for agent-readiness: 9–18 months, £4M–£10M

Layer 2: Point Solutions (The Sprawl)

The Company’s Stack:

Salesforce for CRM: quotes, opportunities, customer interactions

Stripe for payment processing: card processing, reconciliation, fraud checks

DocuSign for contract management: e-signature, audit trails, version control

Anaplan for financial planning: pricing scenarios, margin modeling

Zendesk for support: customer issues, escalations, resolution tracking

The Agent’s Needs:

To execute quote-to-cash, the agent needs to:

Create and version quotes (Salesforce)

Get contract signed (DocuSign)

Collect payment (Stripe)

Track customer satisfaction (Zendesk)

Update pricing models for next iteration (Anaplan)

The Problem:

API rate limits and orchestration hell:

Salesforce: 10,000 API calls/day (basic plan) or 100,000 (enterprise)

Stripe: 100 requests/second, but bursting into thousands of calls during batch processing hits limits

DocuSign: 50 API calls/minute per account

A single quote-to-cash workflow might require:

3 Salesforce calls (create quote, update opp, log activity)

1 Stripe call (validate customer payment method)

1 DocuSign call (send contract)

1 Anaplan call (update pricing model)

Multiply that by 100 concurrent workflows during business hours and you’re hitting rate limits constantly. Agents queue, retry, time out.
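The arithmetic behind that claim can be checked with a short Python sketch. Call counts and limits are the figures quoted above, with Salesforce at the 100,000/day enterprise tier. Anaplan is left out because no limit is given. Two further assumptions are for illustration only: “100 concurrent workflows” is read as 100 workflows started per minute, and all traffic falls evenly inside an 8-hour business day.

```python
# Calls per quote-to-cash workflow, from the list above.
CALLS_PER_WORKFLOW = {"salesforce": 3, "stripe": 1, "docusign": 1, "anaplan": 1}

# (limit, window in minutes) as quoted in the text. Anaplan has no stated limit.
LIMITS = {
    "salesforce": (100_000, 24 * 60),  # enterprise tier: 100,000 calls per day
    "stripe": (100, 1 / 60),           # 100 requests per second
    "docusign": (50, 1),               # 50 calls per minute
}

def utilisation(workflows_per_minute: float, business_hours: float = 8) -> dict[str, float]:
    """Demand divided by limit per rate-limited system; above 1.0 means throttling.

    Assumes traffic is spread evenly across business hours and is zero outside them.
    """
    active_minutes_per_day = business_hours * 60
    result = {}
    for system, (limit, window_minutes) in LIMITS.items():
        calls_per_minute = workflows_per_minute * CALLS_PER_WORKFLOW[system]
        # Only the part of the window that overlaps business hours carries traffic.
        busy_minutes = min(window_minutes, active_minutes_per_day)
        result[system] = calls_per_minute * busy_minutes / limit
    return result

for system, ratio in utilisation(100).items():
    print(f"{system:>10}: {ratio:.2f}x of limit")
```

Under these assumptions, DocuSign runs at twice its quoted per-minute limit. The Salesforce daily allowance (1.44x demand) is exhausted about 5.5 hours into the day. Stripe sits far below its ceiling. On these figures, the per-minute and per-day quotas are the bottleneck, not raw throughput.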

No unified semantics:

Salesforce “opportunity” ≠ Anaplan “deal”

Stripe “customer” ≠ Salesforce “account”

DocuSign “document” ≠ a legal contract (it’s a signed artifact, not the source of terms)

Agents can’t reason across systems without extensive mapping.

Event orchestration is manual:

When a contract is signed in DocuSign, does the Salesforce opportunity auto-close? (That depends on a Zapier zap that might be broken.)

When Stripe payment succeeds, does Oracle AR posting trigger? (Requires custom middleware, usually via nightly batch)

Agents can’t rely on these events arriving; they have to poll or make strong assumptions.
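When no reliable event exists, polling has to be bounded, or the agent waits forever on a broken zap. This is a minimal sketch. `check` stands in for a hypothetical status lookup, for example “is this payment posted in AR yet?”. `sleep` and `clock` are injectable only so the loop can be tested without real waiting.

```python
import time
from typing import Callable

def poll_until(check: Callable[[], bool], timeout_s: float, interval_s: float = 1.0,
               sleep: Callable[[float], None] = time.sleep,
               clock: Callable[[], float] = time.monotonic) -> bool:
    """Call `check` until it returns True or `timeout_s` elapses.

    Returns False on timeout so the caller can escalate instead of assuming success.
    """
    deadline = clock() + timeout_s
    while True:
        if check():
            return True
        remaining = deadline - clock()
        if remaining <= 0:
            return False
        # Never sleep past the deadline.
        sleep(min(interval_s, remaining))
```

A caller would then write something like `if not poll_until(lambda: ar_posted(invoice_id), timeout_s=300): escalate(...)`. Here `ar_posted` and `escalate` are hypothetical. Timing out becomes an explicit outcome rather than an assumption that the downstream step happened.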

Duplicate and conflicting data:

Customer email is in Salesforce, Zendesk, and Stripe (which version is authoritative?)

Customer tier is in Salesforce, Anaplan, and SAP (they disagree because they update on different schedules)

The agent makes decisions on whichever data it sees first, risking inconsistency.
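The usual fix is to declare, per field, which system wins, rather than letting the agent take whichever value arrives first. This is a sketch under assumed precedence rules. Which system is authoritative for which field is a business decision, and the rankings below are illustrative only.

```python
from typing import Optional

# Hypothetical survivorship rules: for each field, systems in order of authority.
PRECEDENCE = {
    "email": ["salesforce", "stripe", "zendesk"],
    "tier": ["sap", "salesforce", "anaplan"],
}

def authoritative(field: str, values: dict[str, Optional[str]]) -> tuple[str, str]:
    """Pick the value from the most authoritative system that has one.

    `values` maps system name -> value seen there. Returns (system, value).
    Raises KeyError if no ranked system holds a value, so the agent cannot
    silently fall back to an unranked source.
    """
    for system in PRECEDENCE[field]:
        value = values.get(system)
        if value:
            return system, value
    raise KeyError(f"no authoritative value for {field!r}")

def conflicts(values: dict[str, Optional[str]]) -> bool:
    """True when the systems that hold a value disagree with each other."""
    return len({v for v in values.values() if v}) > 1
```

Using `conflicts` alongside `authoritative` lets the agent act on the ranked value while still flagging the disagreement for data stewards.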

Time to fix Layer 2 for agent-readiness: 4–9 months, £2M–£5M

Layer 3: Integration / Middleware (The Plumbing)

The Company’s Current State:

Zapier for simple triggers (e.g., “new Salesforce opp → alert Slack”)

MuleSoft for SAP–Oracle middleware (older, runs on-prem, hard to change)

Python/Node.js scripts for data extractions (not documented, owned by ex-employees)

No API gateway, no central registry of integrations

What Agents Need:

To orchestrate quote-to-cash, the agent needs a coherent integration layer where:

Every system is discoverable (API registry)

Every call is logged and traceable (observability)

Rate limits and timeouts are managed (orchestration)

Failures trigger retries and human escalations (resiliency)

Access is controlled and audited (governance)

The Problem:

Integration layer is fragmented:

Zapier for point-to-point connections (no cross-system visibility)

MuleSoft for core integrations (proprietary, slow to change, expensive to scale)

Scripts for ad-hoc extractions (unmaintained, break when upstream changes)

Agents see a chaotic, unreliable landscape. They have to guess which integration to use and hope it’s current.

No API contracts:

SAP might change an OData endpoint (happens ~quarterly)

Salesforce deprecates an API version (they retire versions every 2 years)

Agents don’t know endpoints are stale until they call them and fail.
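A registry can record each integration’s pinned version, any announced retirement date and an owner. With that in place, a nightly job can find stale endpoints before a live agent call does. This is a minimal sketch. The entries, version labels and dates are placeholders, not real SAP or Salesforce retirement dates.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass(frozen=True)
class IntegrationContract:
    name: str
    system: str
    version: str
    sunset: Optional[date]  # announced retirement date, if any
    owner: str              # team accountable when it breaks

# Placeholder registry entries for illustration only.
REGISTRY = [
    IntegrationContract("quote.create", "salesforce", "v1", date(2026, 6, 1), "sales-ops"),
    IntegrationContract("credit.read", "sap", "v2", None, "finance-it"),
]

def at_risk(today: date, horizon_days: int = 90) -> list[str]:
    """Names of contracts that retire within `horizon_days` (or already have)."""
    return [
        c.name for c in REGISTRY
        if c.sunset is not None and (c.sunset - today).days <= horizon_days
    ]

print(at_risk(date.today()))
```

Keeping an `owner` on every entry is the governance half of the contract. When `at_risk` flags something, there is a named team to fix it.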

Observability is missing:

No central logging of integration calls

When a quote-to-cash orchestration fails at step 4 (e.g., DocuSign call times out), no one knows why

Agents can’t self-diagnose; they just fail and log “timeout”
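Observability can start with one structured log line per integration call, tied together by a correlation ID for the whole workflow, so that “failed at step 4” becomes searchable. This is a minimal sketch using Python’s standard `logging`, `json` and `uuid` modules. The `call_with_trace` helper and its field names are hypothetical.

```python
import json
import logging
import time
import uuid

logger = logging.getLogger("integration")

def call_with_trace(workflow_id: str, step: str, system: str, fn, *args, **kwargs):
    """Run one integration call and emit a single JSON log record for it.

    The record is written whether the call succeeds or raises, so a timeout at
    step 4 leaves a trace with the workflow ID, system and duration.
    """
    start = time.monotonic()
    status = "ok"
    try:
        return fn(*args, **kwargs)
    except Exception as exc:
        status = f"error:{type(exc).__name__}"
        raise
    finally:
        logger.info(json.dumps({
            "workflow_id": workflow_id,
            "step": step,
            "system": system,
            "status": status,
            "duration_ms": round((time.monotonic() - start) * 1000, 1),
        }))

logging.basicConfig(level=logging.INFO, format="%(message)s")
workflow_id = str(uuid.uuid4())  # one ID shared by every step of a quote-to-cash run
call_with_trace(workflow_id, "create_quote", "salesforce", lambda: "quote-123")
```

Because the record is emitted in `finally`, a DocuSign timeout produces the same searchable line as a success, just with `status` set to the exception type.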

Error handling is ad-hoc:

If DocuSign API is down, does the agent retry? How many times? How long to wait?

If Stripe payment fails (insufficient funds), does the agent escalate to human? (Depends on fallback logic that might not exist)

Agents need clear policies; most organisations don’t have them.
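A “clear policy” can be as small as four decisions: which errors are retryable, how many attempts to make, how long to wait between them, and what happens when attempts run out. This is a sketch using exponential backoff with full jitter. The exception classes and the `escalate` callback are hypothetical stand-ins for whatever the real clients raise and wherever human work is queued.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")

class TransientError(Exception):
    """Timeouts, 429s, 503s: worth retrying."""

class PermanentError(Exception):
    """Declined payment, validation failure: retrying will not help."""

def with_retry(fn: Callable[[], T], escalate: Callable[[Exception], None],
               attempts: int = 4, base_delay_s: float = 0.5, max_delay_s: float = 8.0,
               sleep: Callable[[float], None] = time.sleep) -> Optional[T]:
    """Call `fn`, retrying transient failures with exponential backoff and jitter.

    Permanent failures, and transient ones that outlast `attempts`, are handed to
    `escalate` (for example, a human queue) and the function returns None.
    """
    for attempt in range(attempts):
        try:
            return fn()
        except PermanentError as exc:
            escalate(exc)
            return None
        except TransientError as exc:
            if attempt == attempts - 1:
                escalate(exc)
                return None
            # Full jitter: sleep a random amount up to the capped exponential delay.
            sleep(random.uniform(0, min(max_delay_s, base_delay_s * 2 ** attempt)))
    return None
```

In these terms, Stripe’s “insufficient funds” would map to `PermanentError` (escalate to a human), while a DocuSign timeout would map to `TransientError`. Writing that mapping down is the step most organisations skip.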

Time to fix Layer 3 for agent-readiness: 6–12 months, £3M–£8M

Layer 4: Analytics & Reporting (The Intelligence Cabal)

The Company’s Current State:

Tableau dashboards for executive reporting (refreshed daily)

Power BI for sales ops and finance (updated nightly)

Looker for custom analytics (real-time where possible, batch where not)

Custom SQL in data warehouse for ad-hoc analysis (slow to maintain, fragile)

What Agents Need:

To optimise and improve quote-to-cash, agents need:

Real-time cycle time metrics (Day 1 vs. Day 45)

Real-time customer profitability (which quote should we prioritise?)

Real-time pricing effectiveness (is this quote margin healthy?)

Real-time anomaly detection (this quote looks wrong, alert human)

The Problem:

Batch vs. real-time mismatch:

Dashboards refresh at 6am daily. Agents need data every second.

Quote-to-cash happens in minutes. A dashboard that is 6 hours stale is useless.

Agents will make decisions on yesterday’s data, sub-optimally.

Lineage and quality issues:

Power BI pulls from SAP, Oracle, Salesforce

Data transformations are hidden in ETL logic (Informatica, custom scripts)

Agent can’t trace a metric back to source (violates auditability requirements)

Finance/Compliance won’t accept agent decisions based on opaque metrics.

Aggregation vs. transaction detail:

Dashboards show monthly/quarterly trends

Agents need transaction-level detail (this specific order, this customer, this margin)

Data warehouse has both, but querying from warehouse has latency (data arrives 4–6 hours after ERP posting)

Exception detection is manual:

“This quote is 30% below the target margin” is a metric humans check

Agents can calculate it, but they can’t decide whether to approve without clear policies

Most companies don’t codify these policies explicitly (they live in “tribal knowledge”)

Time to fix Layer 4 for agent-readiness: 3–6 months, £1M–£3M

Layer 5: Collaboration & Productivity (The Workarounds)

The Company’s Current State:

Excel for pricing exceptions (country managers manually override Anaplan)

Email for approvals (“Please OK this deal by EOD”)

Slack for urgent escalations (“This customer needs a 40% discount. Deal’s in jeopardy!”)

PowerPoint for deal reviews (sales manager compiles deck, CFO reviews, approves)

What Agents Need:

To execute quote-to-cash autonomously, agents need to:

Accept approvals from humans (if customer demands terms outside policy)

Alert humans to exceptions

Provide explainable reasoning (“Why I’m recommending this margin”)

Get human veto capability (“No, I’m overriding your recommendation”)

The Problem:

Approval workflows are human-centric:

CFO expects a deal review meeting, not an automated “approved by agent” notification

Discount approvals are based on relationship context (“this customer is strategic”), not automated policies

Agents can’t replicate relationship judgment; they need human override.

Escalation paths are unclear:

If an agent gets stuck (e.g., customer demands 60-day payment terms, policy allows 30), does it escalate to sales leader? CFO? Credit committee?

Different deals follow different paths depending on size, customer, product (implicit, not coded)

Agents default to “ask human,” defeating automation value.
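Writing the implicit paths down as an ordered rule table lets an agent escalate to a specific approver instead of a generic “ask human”. This is a sketch. Only the 30-day policy and the 60-day request come from the example above. The deal-size threshold, the discount threshold and the role names are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Deal:
    value_gbp: float
    payment_terms_days: int
    discount_pct: float

POLICY_TERMS_DAYS = 30  # standard payment terms, from the example above

# Hypothetical routing table, checked top to bottom; first match wins.
ROUTES = [
    (lambda d: d.value_gbp >= 1_000_000, "credit_committee"),
    (lambda d: d.payment_terms_days > 2 * POLICY_TERMS_DAYS, "cfo"),
    (lambda d: d.payment_terms_days > POLICY_TERMS_DAYS, "sales_leader"),
    (lambda d: d.discount_pct > 20, "sales_leader"),
]

def route(deal: Deal) -> Optional[str]:
    """Return who must approve, or None if the deal is inside policy."""
    for matches, approver in ROUTES:
        if matches(deal):
            return approver
    return None

print(route(Deal(value_gbp=50_000, payment_terms_days=60, discount_pct=5)))
```

Ordering matters: size is checked before terms, so a large deal goes to the credit committee even if its terms alone would only need a sales leader.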

Audit and compliance requirements:

Every approval decision must be logged and explainable (“Why did we approve a discount?”)

Email chains and Slack messages aren’t audit-friendly

Agents need to post approvals to an audit trail, not leave them in Excel.
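One option is an append-only audit trail in which each approval record carries the hash of the previous one, so after-the-fact edits become detectable. This hash-chaining technique is one choice among several; a write-once store or the ERP’s own change log are others. This is a minimal sketch, and the record fields are assumptions.

```python
import hashlib
import json

def _digest(record: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode()).hexdigest()

class AuditTrail:
    """Append-only log of approval decisions, chained by SHA-256."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[tuple[dict, str]] = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        h = _digest(record, prev)
        self.entries.append((dict(record), h))
        return h

    def verify(self) -> bool:
        """Recompute the chain; False means some entry was altered or reordered."""
        prev = self.GENESIS
        for record, h in self.entries:
            if _digest(record, prev) != h:
                return False
            prev = h
        return True
```

Unlike an email thread or a Slack message, each entry here records who decided and why, and a later edit to any entry breaks `verify()`.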

Time to fix Layer 5 for agent-readiness: 2–4 months, £500K–£1.5M

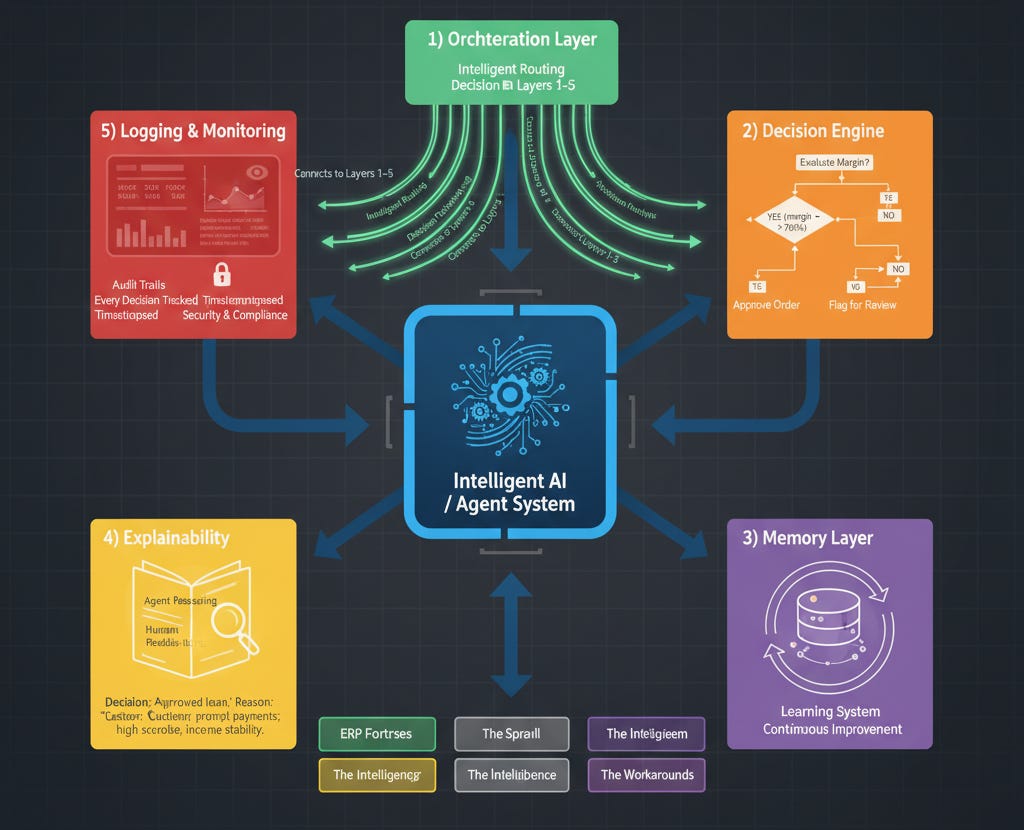

Layer 6: Emerging AI / Agents

What Needs to Exist:

An orchestration layer that calls Layers 1–5 intelligently

A decision engine with clear rules (approve if margin > 20%, customer credit score > 700, etc.)

A memory layer that tracks agent decisions and outcomes (so agents improve)

Explainability (agent can tell humans why it made a decision)

Logging & monitoring (every agent decision is auditable)
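The decision-engine and explainability items can be sketched together as rules that return both a verdict and the reasons behind it. The two thresholds (margin > 20%, credit score > 700) are the ones quoted above. The `Quote` fields are assumptions, and so is the choice that a failed check escalates rather than declines.

```python
from dataclasses import dataclass

@dataclass
class Quote:
    quote_id: str
    margin_pct: float
    credit_score: int

# Each rule: (human-readable description, predicate). Thresholds from the text.
RULES = [
    ("margin > 20%", lambda q: q.margin_pct > 20),
    ("credit score > 700", lambda q: q.credit_score > 700),
]

def decide(q: Quote) -> tuple[str, list[str]]:
    """Approve only if every rule passes; otherwise escalate, listing the failed rules."""
    failed = [desc for desc, ok in RULES if not ok(q)]
    if failed:
        return "escalate", [f"failed: {d}" for d in failed]
    return "approve", [f"passed: {d}" for d, _ in RULES]

print(decide(Quote("Q-2", margin_pct=18.5, credit_score=650)))
```

The reasons list is the explanation a human reviewer (and an auditor) would see. Because the rules are data, changing a threshold is a reviewed edit rather than a retrained model.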

The Problem:

Agent development is isolated from infrastructure:

You can build an agent prototype in 4 weeks using LangChain + GPT-4

Deploying it to production (with governance, monitoring, escalation) takes 6+ months

Most companies see the prototype and overestimate readiness.

Agents inherit system fragility:

If Layers 1–5 are unreliable, agents amplify the unreliability

Agent calls Salesforce API, gets stale data, makes wrong pricing decision, customer disputes it

Blame lands on “AI,” not on the fragmented data/integration layer below.

Data quality issues become agent quality issues:

30–40% of customer data in Salesforce is wrong (email, address, phone)

Agent pulls contact info, sends quote to wrong email

“The AI broke our customer relationship” (actually, the data was broken).

Time to build Layer 6 properly: 3–4 months, £500K–£1.5M

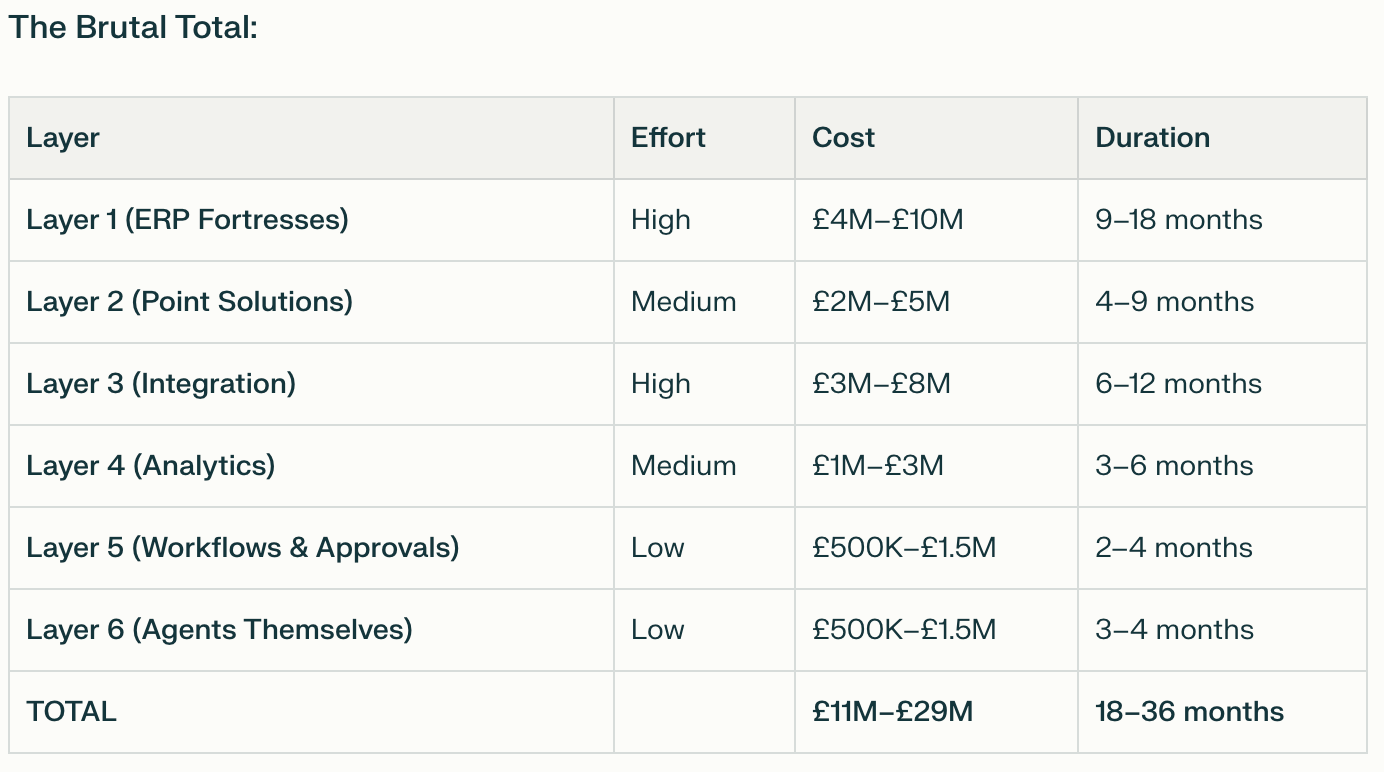

To automate one cross-functional process (quote-to-cash), a typical F500 company will spend £11M–£29M over 18–36 months.

Now the market is valuing agentic AI platforms as if enterprises will deploy 50 agents across 10 functions in 12 months.

Do the math: 50 agents × £20M per agent = £1B investment per company.
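The totals can be reproduced from the per-layer estimates above (low and high ends, in £M). The per-layer timelines sum to 27–53 months if done one after another. The 18–36 month figure therefore presumably assumes the layers are worked on in parallel.

```python
# Per-layer estimates from the text: (months_low, months_high, cost_low_gbp_m, cost_high_gbp_m)
LAYERS = {
    "1 systems of record": (9, 18, 4.0, 10.0),
    "2 point solutions": (4, 9, 2.0, 5.0),
    "3 integration": (6, 12, 3.0, 8.0),
    "4 analytics": (3, 6, 1.0, 3.0),
    "5 collaboration": (2, 4, 0.5, 1.5),
    "6 agents": (3, 4, 0.5, 1.5),
}

cost_low = sum(v[2] for v in LAYERS.values())
cost_high = sum(v[3] for v in LAYERS.values())
months_serial = (sum(v[0] for v in LAYERS.values()), sum(v[1] for v in LAYERS.values()))

midpoint = (cost_low + cost_high) / 2  # the ~£20M per-process figure used above
portfolio = 50 * midpoint              # 50 agents at that figure, in £M

print(f"Cost per process: £{cost_low:.0f}M–£{cost_high:.0f}M (midpoint £{midpoint:.0f}M)")
print(f"If run serially: {months_serial[0]}–{months_serial[1]} months")
print(f"50 processes: £{portfolio / 1000:.1f}B")
```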

F500 companies don’t have that appetite.

Why the Current Narrative Will Fail: The Constraints

Constraint 1: True multi-platform agentic orchestration (via Palantir or ServiceNow) will remain niche. Platform-specific agents will proliferate (Salesforce agents, SAP agents, Oracle agents—running inside each silo).

SAP, Oracle, and Salesforce don’t want you extracting data cleanly to third-party orchestration layers (like Palantir).

They want you to stay inside their walled gardens:

SAP wants you in SAP Analytics Cloud + SAP AI

Oracle wants you in OCI + Oracle AI

Salesforce wants you in Salesforce Data Cloud + Agentforce

These vendors will:

Keep APIs limited (rate-limited, feature-gated)

Prioritise in-platform orchestration (Einstein Copilot, SAP Joule, Oracle Enterprise Data Platform AI)

Raise licensing costs for data export or third-party integrations

Constraint 2: Data Quality Ceiling

Even optimistic audits find 30–50% of core data is dirty (wrong formats, missing fields, duplicates).

Fixing data at enterprise scale takes 12–24 months and £5M–£15M.

Most companies won’t make that investment.

Instead, they’ll deploy agents on “good enough” data, get disappointing results, and scale back.

Constraint 3: Organisational Risk Aversion

Agents making autonomous decisions in revenue, finance, HR, or supply chain terrify boards.

The question every board will ask: “If an agent makes a £100K mistake, who’s liable?”

No one has a good answer yet. So organisations will keep humans in critical loops, limiting agent autonomy (and value).

Constraint 4: Skill and Capacity Gaps

Designing agentic workflows requires hybrid skills: process design, data fluency, AI literacy, and engineering.

Most organisations don’t have these skills layered onto existing teams. Hiring or building them takes 12–18 months.

What 2026–2030 Might Actually Look Like

The Narrative Reset (2026)

Palantir’s stock price will wobble.

ServiceNow’s agentic AI announcements will underwhelm Wall Street.

Salesforce’s Agentforce will see slower adoption than expected.

The market will realize: autonomous agents at scale require fixing the entire stack — and that’s expensive, slow, and organizationally risky.

The Architectural Shift (2026–2027)

Rather than cross-platform orchestration, the dominant pattern will be:

In-platform agents (faster, easier, lower risk):

Salesforce customers deploy Agentforce for sales/service workflows

SAP customers deploy SAP Joule for finance/supply chain

Workday customers deploy Workday agents for HR/finance

These agents work inside the platform, not across platforms

Hybrid orchestration (similar to hybrid cloud):

Organisations accept that some workflows are “ERP-centric” (run inside SAP, Oracle, etc.)

Other workflows are “integration-centric” (use middleware like MuleSoft, custom APIs)

Agents live at the edges, not in a unified “orchestration layer”

Managed services dominance:

Rather than “building agents,” organisations will buy pre-built, vertical agent templates

Veeva agents for life sciences

Procore agents for construction

Workday agents for HR/finance

These are 80% good enough, 20% customizable

The Real Winners (2027–2030)

Not “agentic AI platforms” in the abstract, but:

Vertical SaaS with embedded agents (Veeva, Procore, Workday, ServiceTitan)

Deep domain expertise + integrated data models = agents that work out of the box

Lower implementation cost + faster time to value

ERP vendors with strong in-platform orchestration (SAP, Oracle, Salesforce)

They control the core data; they’ll own the agents that touch that data

Agents that stay inside the fortress are cheaper, faster, lower-risk

Managed service providers (Accenture, Deloitte, Cognizant, TCS)

They’ll sell “quote-to-cash automation” as a managed service, not as “deploy agents yourself”

They’ll bundle implementation, data fixing, and ongoing monitoring

They’ll capture 70%+ of total value

Horizontal integration platforms (MuleSoft, modern iPaaS)

As organisations realize they need better integration, demand for middleware grows

Integration becomes a core strategic capability, not a cost center

The Agents That Will Actually Deploy (By 2028)

RPA + gen AI hybrids on well-defined processes (invoice processing, expense approval, ticket triage)

In-platform copilots (Salesforce copilot, SAP copilot) that augment users, not replace them

Vertical agents for industry-specific workflows

Narrow orchestrators (single workflows, single function) not “enterprise orchestration” platforms

What will not happen: Cross-platform, multi-agent, autonomous orchestration at enterprise scale.

Why? Because the prerequisites (clean data, semantic alignment, stable APIs, governance frameworks) are too expensive and risky to implement at the pace the market expects.

The Parallel to Cloud: Hybrid Always Wins

In 2010, the cloud narrative was pure: “On-premise is legacy; everything goes cloud.”

By 2020, every F500 company ran a hybrid stack: mission-critical systems stayed on-premise, new workloads went to cloud, everything else lived in the middle.

The companies that won weren’t those that said “on-premise is dead.”

The companies that won were those that said “let’s help you optimise across both.”

The agentic AI story will follow the same path:

2024–2025: Pure narrative (“agents will orchestrate everything”)

2026–2027: Reality collides with narrative (“this is harder and more expensive than expected”)

2028–2030: Hybrid model emerges (“in-platform agents + managed services + targeted integrations”)

Dotcom Redux: Why the Lesson Matters

Pets.com had a great product and deep-pocketed investors.

But it ignored the underlying constraints of its supply chain: logistics is expensive, inventory management is complex, and same-day delivery wasn’t feasible in 2000.

When they hit those constraints, no amount of hype could override physics.

Agentic AI is hitting similar constraints now:

Data fortresses (SAP, Oracle, Salesforce) that won’t play nicely with orchestration platforms

Legacy integration that’s fragile and expensive to modernize

Data quality that’s worse than anyone admits

Organisational risk aversion that keeps humans in critical loops

The AI is great. The orchestration platforms are technically sound. But the underlying enterprise infrastructure wasn’t built for this use case.

And unlike Pets.com (which could theoretically rethink logistics), fixing decades of ERP complexity, data silos, and organisational structures is not a 12-month problem.

What Actually Happens in 2026–2030

Phase 1: Disillusionment (2026)

Palantir’s stock corrects 40–60% as analysts realize enterprise adoption is slower than expected

ServiceNow and Salesforce lower agentic AI guidance

Numerous “agentic AI” startups pivot or shut down

The headline switches from “agents will transform everything” to “agents have limitations”

Phase 2: Pragmatism (2027–2028)

Enterprise focus shifts from “cross-platform orchestration” to “in-platform agents + managed services”

Organisations invest in data foundations (not for agents, but for analytics and compliance)

Managed service providers quietly capture the largest deals

Vertical SaaS platforms integrate agents into their products (not visible to end-users, but powerful)

Phase 3: Optionality (2028–2030)

Hybrid orchestration becomes the norm: some workflows use in-platform agents, some use managed services, some stay manual

“Multi-agent orchestration” remains a capability for leading-edge organisations (top 20% of F500), not the mainstream

Agent technology matures and becomes cheaper (similar to RPA, which is now commoditized)

The narrative shifts: agents are one tool in the transformation toolkit, not the transformation

The Investment Implication

If you believe the timeline above, the trades are:

Be Cautious On (2026–2027)

Pure-play agentic AI orchestration platforms (Palantir, early-stage agent startups)

Too dependent on enterprises fixing their stacks first

Current valuations (15–50x revenue) don’t reflect the 18–36 month sales cycle

Expect multiple compression and guidance misses

Hyperscale cloud stocks on “AI-only” narratives

Azure, AWS, and GCP will grow regardless

But “agents driving compute consumption” is a 2028–2030 story, not 2025–2026

Patience will be required

Long On (2026–2030)

ERP vendors (SAP, Oracle, Salesforce) because in-platform agents + data control = defensible

Slower growth than the bull case suggests, but more durable

Multiples should be stable or slightly higher as agents prove themselves in-platform

Vertical SaaS with strong data models (Veeva, Procore, Workday, ServiceTitan)

Agents embedded in domain-specific tools = faster adoption, lower friction

Customers don’t need to think about orchestration; it’s built in

Managed service providers on a 24–36 month horizon (hidden winners)

They’ll quietly capture 60–70% of agentic AI transformation spend

Unlikely to be rewarded by the market for this, but cash flow will be strong

Integration infrastructure (MuleSoft, modern iPaaS, event-driven architectures)

As organisations realise integration is the constraint, demand grows

Not glamorous, but durable and high-margin

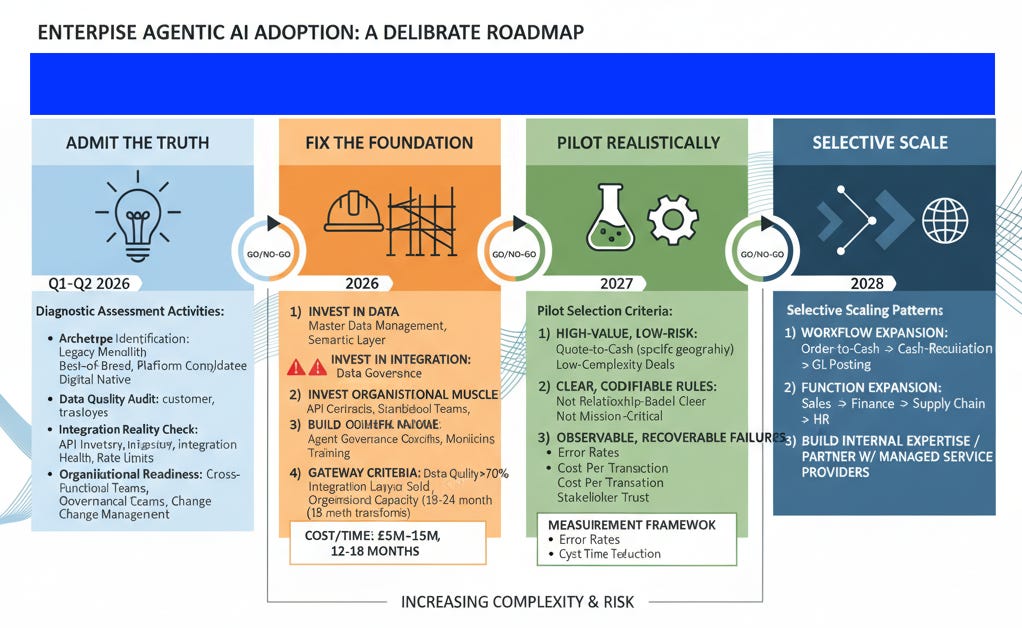

The 2026 Roadmap for Enterprises (The Realistic Version)

If you’re a CIO/CFO/CEO in 2026, here’s what actually needs to happen:

Phase 1: Admit the Truth (Q1–Q2 2026)

Commission an honest assessment:

What archetype are you? (Legacy Monolith, Best-of-Breed Sprawl, Platform Consolidator, Digital Native)

What’s your actual data quality? (Audit core entities: customer, product, transaction, employee)

What’s your integration reality? (API inventory, integration layer health, API rate limit usage)

What’s your organisational readiness? (Do you have cross-functional agent teams? Governance frameworks? Change management capability?)

Phase 2: Fix the Foundation (2026–2027)

If more than half of the problems below apply to you, do not deploy agents yet:

Data quality <70% on core entities

Integration layer that’s fragile or unmaintained

No clear governance model or accountability for agent decisions

Organisational inability to run 18–24 month transformations
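The gate is simple enough to write down. With four listed problems, “more than half” means three or more. This is a sketch. Treating three of the problems as yes/no flags is a simplification. Data quality is kept as a share, checked against the 70% threshold from the list.

```python
from dataclasses import dataclass

@dataclass
class Readiness:
    core_data_quality: float          # share of core-entity records passing audit, 0.0–1.0
    integration_fragile: bool
    no_agent_governance: bool
    cannot_run_long_programmes: bool  # cannot sustain 18–24 month transformations

def blocking_problems(r: Readiness) -> list[str]:
    """List which of the four problems above apply."""
    problems = []
    if r.core_data_quality < 0.70:
        problems.append("data quality below 70% on core entities")
    if r.integration_fragile:
        problems.append("fragile or unmaintained integration layer")
    if r.no_agent_governance:
        problems.append("no governance or accountability for agent decisions")
    if r.cannot_run_long_programmes:
        problems.append("cannot run 18–24 month transformations")
    return problems

def may_deploy_agents(r: Readiness) -> bool:
    """Three or more of the four problems means: fix the foundation first."""
    return len(blocking_problems(r)) <= 2

print(may_deploy_agents(Readiness(0.60, True, True, False)))
```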

Instead:

Invest in data: Master data management, semantic layer, data governance

Invest in integration: Build API contracts, standardize integration patterns, establish monitoring

Build organisational muscle: Create cross-functional teams, establish agent governance councils, train stakeholders

This phase takes 12–18 months and costs £5M–£15M. There are no shortcuts.

Phase 3: Pilot Realistically (2027–2028)

Once the foundation is solid, pilot agents on:

High-value, low-risk workflows (quote-to-cash in a specific geography, low-complexity deals)

Processes with clear, codifiable rules (not those requiring relationship judgment)

Workflows where failures are observable and recoverable (not mission-critical paths)

Measure:

Cycle time reduction (quote-to-cash down from 45 days to 7 days?)

Error rates (do agents make fewer mistakes than humans?)

Cost per transaction (are agents cheaper than manual labor?)

Stakeholder trust (do users trust agent recommendations?)

Publish results. Use them to fund Phase 4.

Phase 4: Selective Scale (2028–2030)

If pilots work:

Extend to adjacent workflows (order-to-cash → cash-to-reconciliation → general ledger posting)

Expand to new functions (Sales → Finance → Supply Chain → HR)

Build in-house expertise or partner with managed service providers for ongoing optimization

Scale is selective, not wholesale. You’re not automating “everything”; you’re automating workflows where agents have already proven themselves.

The Contrarian Thesis (For 2026 and Beyond)

The market thinks: Agentic AI will autonomously orchestrate enterprise workflows by 2026–2027, driving 20–30% productivity gains and unlocking trillions in value.

The reality is: Agentic AI will work exceptionally well in narrow, well-defined domains (vertical SaaS, in-platform agents, managed services). Multi-platform enterprise orchestration will remain niche, expensive, and risky.

The timeline is: 2026 disillusionment, 2027–2028 pragmatism, 2028–2030 selective adoption in leading organisations.

The implication is: The stocks with the most hype (Palantir at 50x revenue) will disappoint. The stocks with strong foundations (ERP vendors, vertical SaaS, managed service providers) will quietly deliver better returns.

This is not a bearish thesis on AI. It’s a realistic thesis on enterprise adoption speed and cost.

The Dotcom bubble wasn’t wrong that the internet would change everything. It was wrong about the timeline and the path.

Agentic AI will change enterprise operations. But not in 12 months. Not with a single “orchestration platform.”

And not without fixing the messy, expensive, unglamorous foundational work first.

That’s the contrarian 2026–2030 roadmap.

Closing: What the Market Will Learn

By 2030, the investment community will understand what consultants already know:

Enterprise transformation is not about the fanciest tool. It’s about data, integration, governance, and change management.

The fanciest tool (agentic AI) only starts to matter once those foundations are solid.

Before that, it’s just expensive lipstick on a fragmented pig.

The winners won’t be the companies that promise the most hype.

They’ll be the companies that are honest about the prerequisites, disciplined about execution, and patient with adoption.

That’s a much less exciting narrative.

But it’s the one that makes money.

Read my next essay, ‘Can the Palantir Playbook Solve the Agentic AI Dilemma?’, publishing in a couple of days.