Why Agentic AI Transformation Fails Without Disciplined Programme Management

A pragmatic framework for moving beyond pilots into production: a strategy-to-execution playbook, from a seasoned Programme Director, for translating ambition into measurable business outcomes.

Executives are fond of quoting hockey great Wayne Gretzky, who is credited with saying: “I skate to where the puck is going to be, not where it has been.” This is sound business advice at one level. But that puck is moving a whole lot faster than it used to as agentic AI rapidly evolves.

McKinsey Quarterly, October 2025

Why Agentic AI Is a Programme, Not a Tool

The arrival of agentic AI systems that can reason, plan, and execute tasks with minimal human intervention is a fundamental shift in how organisations operate, compete, and create value. Yet across boardrooms and delivery floors alike, a worrying pattern emerges: executives champion AI transformation, technology teams build impressive demonstrations, and business units remain sceptical. Pilots proliferate. Benefits remain elusive. Value stays theoretical.

This disconnect is a failure of approach, not ambition or capability. Many organisations treat agentic AI as a technology experiment to be owned by data scientists and innovation labs. They fund isolated proof-of-concepts, celebrate technical achievements, and wonder why operational impact never materialises. The answer is straightforward:

Agentic AI is a strategic capability to be embedded and a transformation to be orchestrated, not a tool to be deployed.

Agentic AI represents a transformation challenge masquerading as a technology deployment. Over the past 18 months, I have worked with organisations across the financial services, professional services, and digital sectors that have launched countless proof-of-concepts, pilot programmes, and departmental experiments. Yet nearly 80 per cent of companies deploying generative AI report no material impact on earnings. This is not a procurement problem. It is a programme management problem.

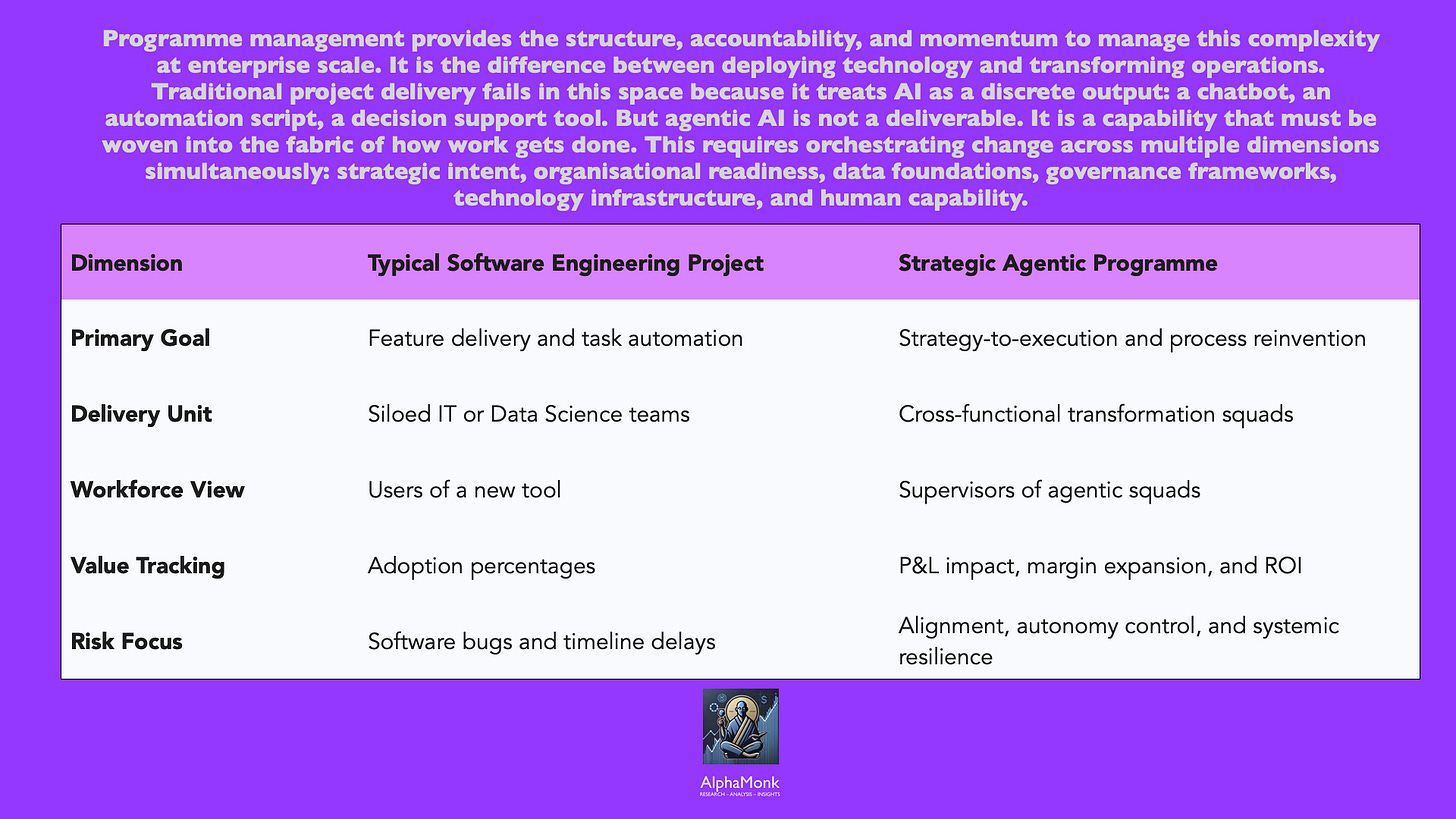

The traditional project delivery model fails in this context. Projects are scoped around discrete deliverables: build the system, test the system, deploy the system, move on. Agentic AI demands something different. It demands a programme mindset — one that starts with strategic intent, translates intent into process redesign, orchestrates technology enablement alongside organisational change, and measures value realisation in operational terms, not system completion terms.

This is precisely where Programme Management discipline becomes indispensable. A Programme Director sits at the nexus of strategy, operations, technology, and people. Their role is to translate corporate ambition into actionable work, to align competing stakeholder interests, to manage risk and uncertainty, and to ensure that value is realised and sustained. It is an essential role in the agentic era.

Navigating the Hype Cycle: Separating Signal from Noise

The Programme Director’s role is to ensure that ambition is matched by execution capability, and to translate strategic excitement into pragmatic, measurable progress. This requires distinguishing between what is technically possible, what is operationally viable, and what is strategically valuable.

The current market is saturated with "vendor-style optimism," where every software update claims to be "agentic." On one side, vendors and technology evangelists promise transformative productivity gains: 40 to 60 per cent reductions in cycle time, autonomous agents managing entire workflows, and the reimagining of how work gets done. On the other, sceptics point to the GenAI paradox and question whether the returns will ever materialise.

The truth lies in the specificity of application, not in the technology itself.

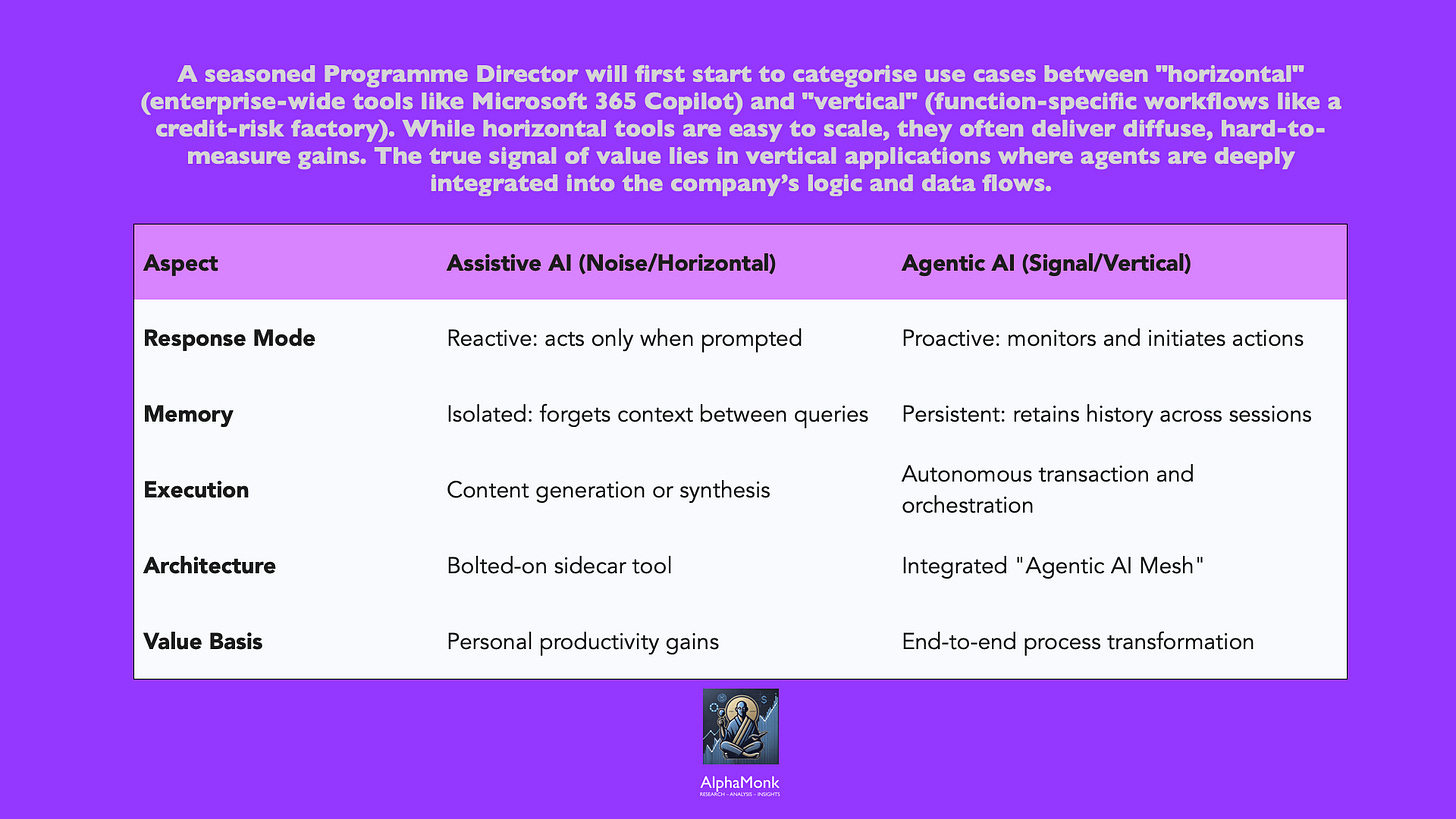

For a seasoned Programme Director, the first task is to create a decision discipline that cuts through this noise. We must distinguish between signal — the genuine operational breakthroughs of autonomous systems — and noise — the repackaged chatbots that remain fundamentally reactive. Assistive AI, such as simple copilots, requires constant human prompting and handles isolated tasks; in contrast, Agentic AI sets its own sub-goals, accesses necessary tools via protocols like the Model Context Protocol (MCP), and adapts its path based on real-time feedback.

Most generative AI deployments to date have focused on horizontal use cases: enterprise copilots, chatbots, and assistive tools deployed across the entire workforce. These are accessible, easy to implement, and widely adopted. Yet they deliver diffuse, hard-to-measure gains. A knowledge worker who saves 5 per cent of their time by using an AI assistant each week does generate value, but that value is diluted across hundreds of individuals and becomes invisible at the P&L level.

Vertical use cases — those embedded into specific business functions and core processes — offer far greater potential. An autonomous agent managing customer service interactions, orchestrating procurement workflows, or processing financial transactions can reduce cycle time by 60 to 90 per cent, cut transaction costs by 40 to 80 per cent, and fundamentally reshape how that function operates. Yet fewer than 10 per cent of such pilots make it to production at scale.

The distinction between assistive AI and agentic AI is equally crucial. Assistive AI augments human decision-making: it generates options, provides insights, and recommends actions, but humans retain control. Agentic AI operates differently. It sets goals, breaks them into subtasks, executes actions across systems, remembers context, and adapts in real time — often without waiting for human instruction. This shift from reactive to proactive, from tool to actor, is the point at which risk, governance, and organisational readiness become non-negotiable.

A Programme Director navigates this landscape by anchoring decisions in business intent, not technology capability. The question is never “What can the latest AI model do?” but rather “What business problem are we solving, and at what level of agent autonomy is that problem best solved?” This discipline cuts through vendor claims and internal enthusiasm alike.

Addressing Colleague Scepticism and Fear of Displacement

Agentic AI often triggers an immediate defensive response from the workforce. In the UK, over 27 per cent of employees express fear that their jobs will disappear within five years. There are legitimate concerns about "ladder-kicking," where junior roles are automated, preventing the next generation from gaining foundational experience. As a Programme Director who has mediated cross-culture delivery friction and aligned with union representation at organisations such as Royal Mail and Transport for London, I know that trust cannot be an afterthought; it must be the foundation of the programme.

The history of technology transformation offers cautionary lessons. Contact centre automation did reduce headcount. Process standardisation did eliminate specialist roles. Performance monitoring systems did create surveillance cultures.

Colleagues are right to be wary.

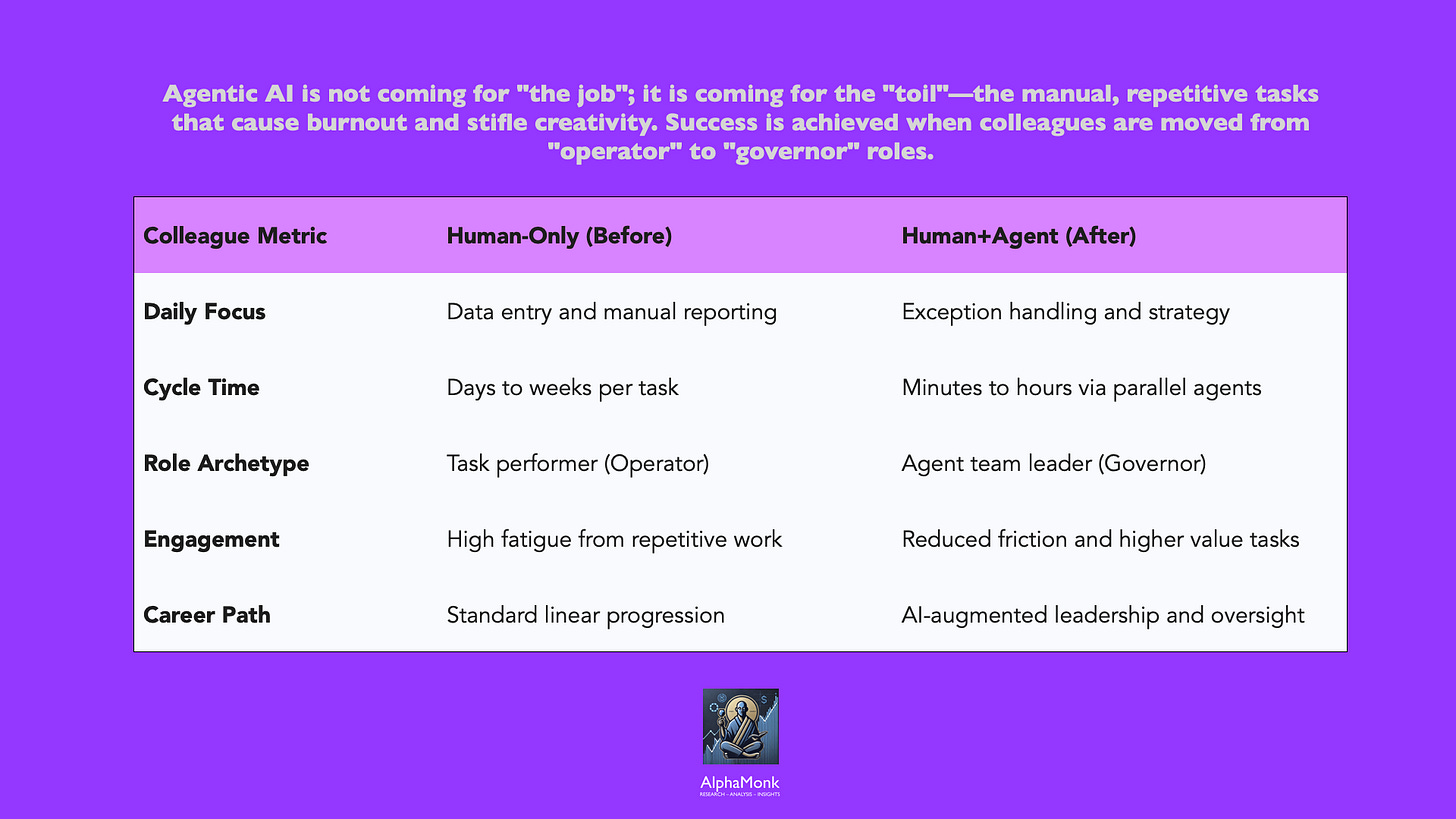

Agentic AI will displace certain categories of work. This is not hyperbole; it is inevitable. The Programme Director’s responsibility is not to deny these risks but to shape how AI is deployed so that it genuinely augments human capability rather than simply replacing it.

In contact centres deploying agentic systems, agents transition from handling routine calls to managing exceptions, coaching systems, and handling the highest-friction customer scenarios.

In finance teams, staff transition from manual reconciliation to analytical oversight and strategic planning.

In software development, engineers shift from coding boilerplate to architectural design and code review.

The throughput increases dramatically, but the composition of the workforce changes.

This requires transparency and intentionality. Organisations that succeed are those that:

Reframe the narrative from replacement to amplification. Rather than “AI will do your job,” the message is “AI will remove friction from your work, allowing you to focus on what matters.” This is only credible if true — and only true if work is genuinely redesigned.

Co-create process design with frontline teams. When agents are inserted into workflows designed by technologists without input from the people who do the work, adoption fails and scepticism deepens. When process redesign happens in partnership between operations, technology, and the teams affected, buy-in increases and design quality improves.

Establish clear boundaries between human and agent decision-making. Colleagues need to understand where agents act autonomously and where humans retain authority. This is not about limiting agent capability; it is about clarity. When a customer service agent can resolve 80 per cent of queries autonomously but escalates complex cases to humans, staff understand the boundary. When it is ambiguous, trust erodes.

Create visibility into decision-making. As agents become more autonomous, colleagues need to understand how decisions are made, where bias might creep in, and where human oversight is embedded. This is as much about building trust as it is about compliance. Explainability systems, audit trails, and regular model performance reviews are not technical niceties; they are foundational to adoption.

The colleague experience improves not when AI removes judgment from work, but when it removes friction. A finance analyst who previously spent 60 per cent of their week on data gathering and validation, and 40 per cent on analysis and insight, can now allocate 20 per cent of their time to data oversight and 80 per cent to analysis. This improvement reduces burnout and eliminates tedious tasks, and colleagues feel more capable, more valued, and more able to contribute meaningfully. Provided it is designed intentionally and communicated clearly, it transforms scepticism into advocacy.

Translating Strategy into a Coherent AI Programme

Executive strategy typically articulates high-level ambitions:

grow revenue by entering adjacent markets, expand margins through operational efficiency, enhance customer loyalty through personalised experiences, improve employee engagement by removing bureaucratic friction.

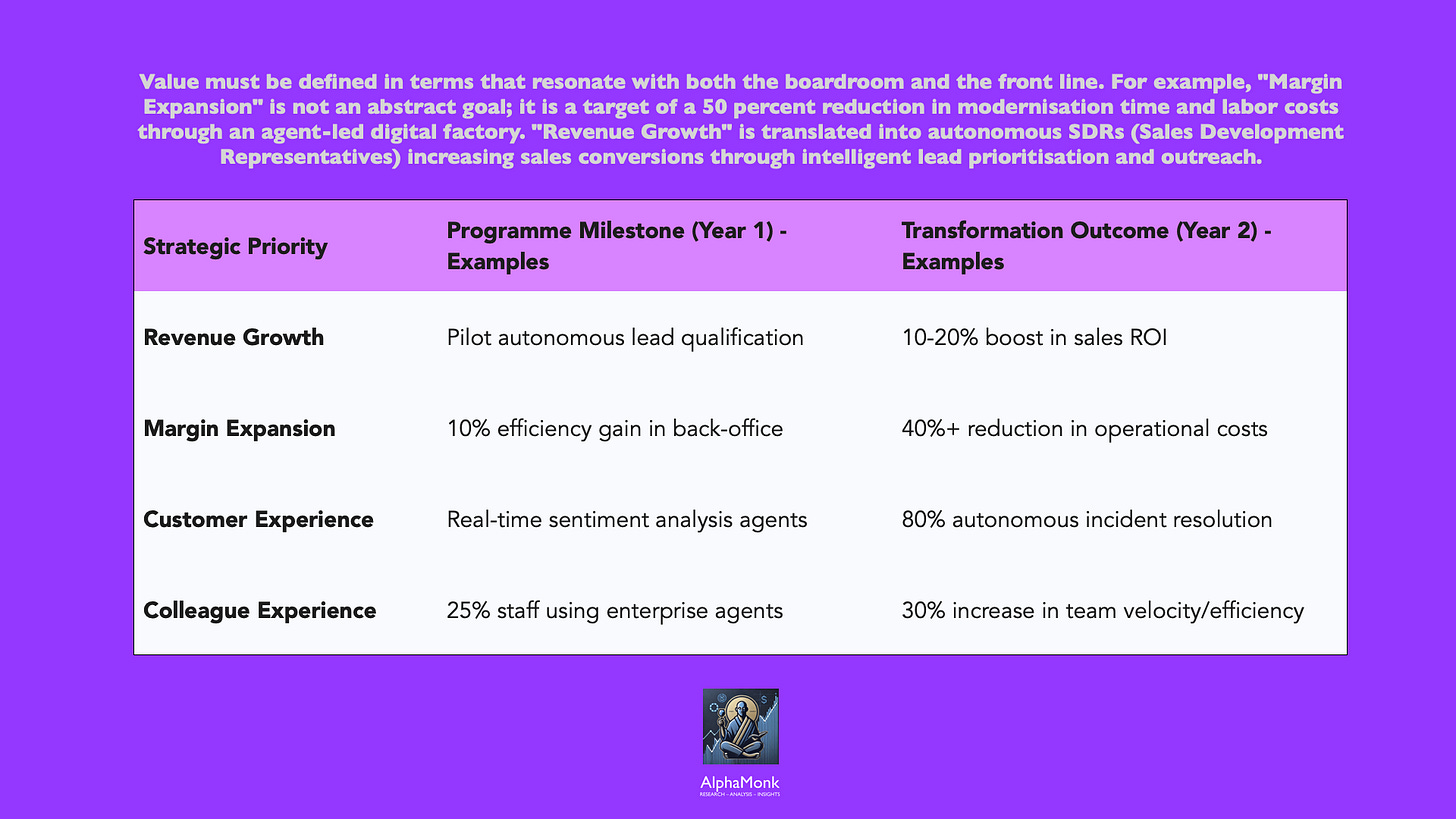

Agentic AI can plausibly contribute to all of these goals. The challenge is translating intent into actionable programmes with clear outcomes, accountable ownership, and measurable impact.

Revenue growth means faster time-to-market, increased win rates, higher customer lifetime value, and access to new market segments. Margin expansion means lower cost-to-serve, higher asset utilisation, and improved pricing power. Customer experience means faster issue resolution, reduced friction, increased personalisation, and higher satisfaction scores. Colleague experience means lower cognitive load, greater autonomy, clearer mastery of core tasks, and reduced time spent on administrative work.

A Programme Director translates these into process-level outcomes and identifies where agentic AI can move the needle.

For revenue growth:

Where are extended sales cycles blocking growth? Can autonomous agents qualify leads, gather client intelligence, and prepare sales teams in ways that compress cycle time? Can agents personalise offers at scale, increasing conversion and upsell? Can they monitor customer behaviour in real time and recommend next-best actions?

These are not hypothetical; they are operational realities in leading organisations.

For margin expansion:

Where is cost structure driven by labour-intensive, repetitive work?

Procurement, transactional finance, claims processing, and customer service operations are prime candidates. An agent orchestrating procurement workflows can reduce time-to-approval by 60 per cent and aggregate buying power across decentralised teams. Agents managing claims can reduce manual handling time by 70 per cent whilst improving accuracy and compliance.

For customer experience:

Where does friction exist in customer journeys?

Check-in, account opening, complaint resolution, and service provisioning are typically fragmented across channels and systems. Autonomous agents that proactively detect issues, diagnose problems, initiate solutions, and communicate progress can reduce resolution time from days to hours and improve satisfaction in parallel.

For colleague experience:

What tasks consume cognitive energy without adding value?

Data gathering, report generation, status tracking, and approval coordination are prime candidates. Agents that automate these create cognitive space for strategic thinking and relationship management.

The programme structure connects these operational outcomes to initiative portfolios. A Programme Director establishes a programme governance layer that spans business, technology, data, operations, and change. This layer ensures that initiatives are aligned to strategic priorities, that technical dependencies are managed, that data is treated as a shared asset, and that change management is coordinated across workstreams.

“AI is bolted on. But to deliver real impact, it must be integrated into core processes, becoming a catalyst for business transformation rather than a sidecar tool. Most deployments today use AI in a shallow way, as an assistant that sits alongside existing workflows and processes, rather than as a deeply integrated, engaged, and powerful agent of transformation.”

Arthur Mensch, CEO of Mistral AI

Critically, the programme operates on a principle of end-to-end process ownership, not isolated use-case delivery. Rather than “Deploy an AI chatbot for HR queries” (an isolated use case), the programme frame becomes “Redesign the employee HR experience end-to-end, embedding agents into onboarding, benefits administration, leave management, and performance support” (an integrated process). The difference in impact is proportional to the difference in scope.

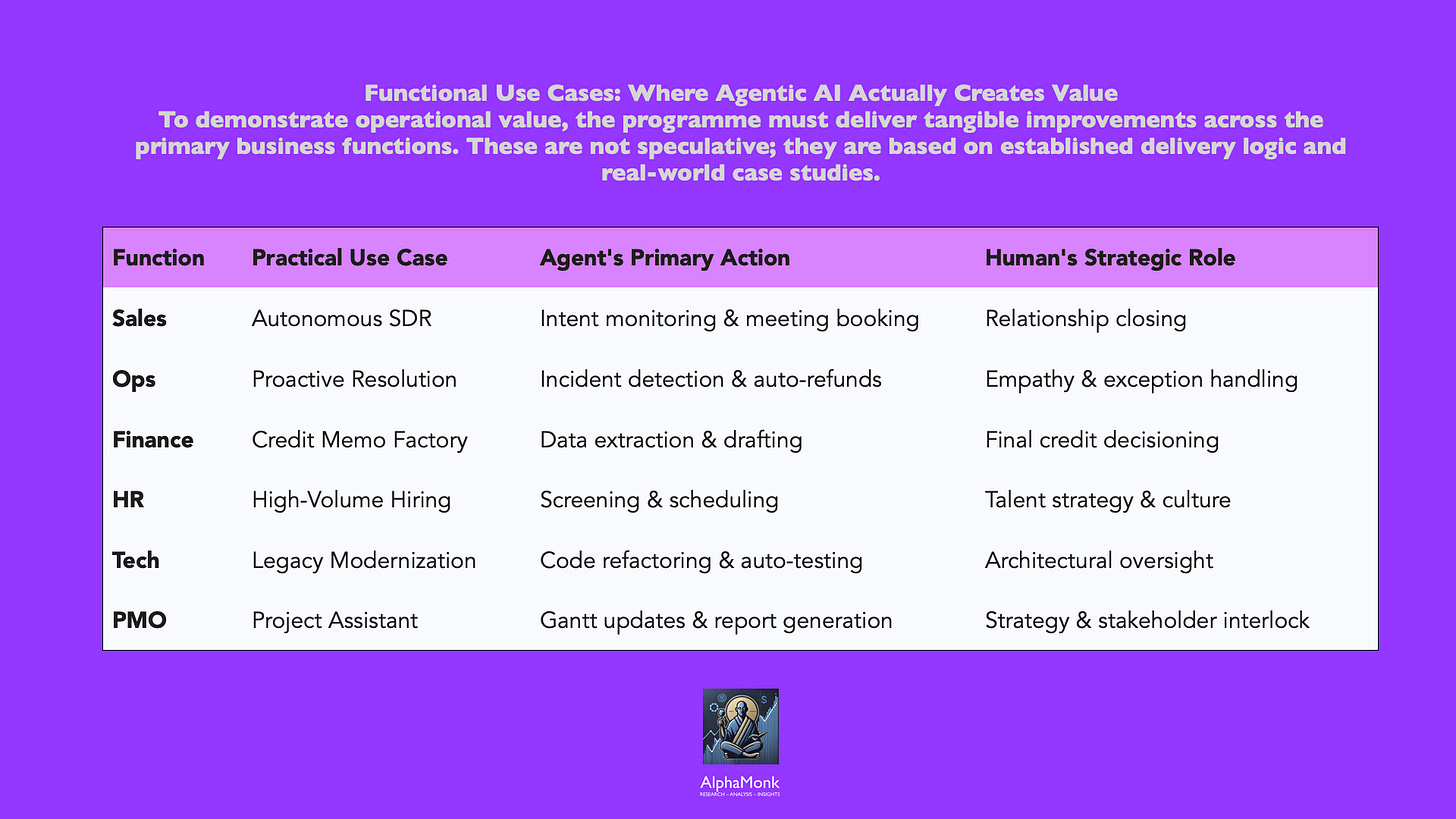

Functional Use Cases Where Agentic AI Can Deliver Measurable Value

The most credible way to cut through scepticism is to ground discussion in realistic use cases, not science fiction.

Commercial & Sales Operations: Sales teams operate in environments of information overload: CRM records, market intelligence, product catalogues, pricing policies, competitive positioning. Autonomous agents can act as an intelligent sales assistant to monitor deal pipelines, flag at-risk opportunities, prepare sales collateral, conduct competitive intelligence, and recommend negotiation levers. In early implementations, this has reduced sales cycle time by 20 to 40 per cent for complex deals and increased forecast accuracy by flagging realistic conversion probabilities in real time. The boundary is clear: agents recommend, qualify, and orchestrate; humans own, decide, negotiate, and close.

Customer Service & Operations: Customer service has long been a target for automation, yet most chatbots fail because they cannot handle ambiguity or escalate gracefully. AI agents can detect issues proactively (delayed shipment, failed payment, service outage), diagnose root causes, initiate resolution autonomously (issue refund, reorder item, update account), and communicate progress to customers. In high-volume service environments, agents can resolve 60 to 80 per cent of routine requests autonomously, with human agents focused on exceptions and escalations. Cycle time drops from days to hours; satisfaction remains stable or increases because routine issues are resolved faster and complex issues receive more focused attention. Colleagues benefit because they handle fewer routine cases and can focus on situations that require empathy, creativity, or judgement.

Finance & Risk: Finance teams spend considerable effort on data reconciliation, variance analysis, and regulatory reporting: tasks that are procedurally complex but cognitively routine. Credit risk assessment, claims adjudication, regulatory reporting, and financial forecasting are traditionally human-intensive and prone to inconsistency. Agentic AI can automate end-to-end workflows to extract data from multiple sources, analyse trends, flag anomalies, and draft decisions for human review. In one bank, autonomous agents supervised by credit officers increased credit memo productivity by 20 to 60 per cent and reduced turnaround time by 30 per cent whilst improving decision quality through consistent application of policy.

At one of my clients, a FinTech specialising in financial crime products, autonomous agents supervised by compliance officers increased transaction screening productivity by 20 to 60 per cent and reduced false positive investigation time by 30 per cent whilst improving detection quality through consistent application of regulatory policy.

Working directly on an Anti-Money Laundering, Payment Fraud, and Sanctions Screening programme, my team implemented agentic AI to autonomously scan and classify transaction records across our compliance workflow. The agents were designed to execute the initial triage (flagging suspicious transactions, cross-referencing sanctions lists, and identifying fraud patterns) whilst remaining under the supervision of our compliance officers.

The impact was transformational. By automating the labour-intensive data gathering and pattern matching that previously consumed 60–70 per cent of analyst time, we achieved a significant reduction in false positives across all three use cases. The agents applied regulatory policy consistently, eliminating the variability that occurs when human analysts manually process high-volume transaction streams under time pressure.

What was particularly striking was the quality improvement, not just the efficiency gains. Compliance officers were freed from repetitive screening tasks and repositioned as decision-makers and exception handlers. They reviewed agent-generated assessments, exercised judgment on edge cases, and maintained oversight of the system’s reasoning. The 30 per cent reduction in turnaround time for escalations meant we could respond to regulatory inquiries faster whilst maintaining audit integrity.

The programme demonstrated that agentic AI’s value in compliance is not replacement of human judgment, but augmentation of human capacity through consistent, policy-aligned automation.

HR & People Operations: Human resources functions manage significant administrative complexity: recruitment, onboarding, leave management, learning recommendations, and succession planning all carry heavy administrative overhead. An agentic AI assistant can screen CVs, coordinate interviews, manage onboarding checklists, recommend training aligned to development plans, and surface retention risks. When integrated end-to-end, the employee experience improves (faster onboarding, more personalised development), and the HR team’s capacity expands dramatically to focus on strategic initiatives such as talent development, organisational design, and culture building.

Technology & Engineering: Software development teams face mounting pressure to deliver faster whilst maintaining quality and security. Code generation, architecture review, testing, and release management are areas where agents have demonstrated remarkable capability. Agents can write boilerplate code, execute unit tests, identify security vulnerabilities, and manage deployment pipelines. The impact on cycle time and quality is substantial, but only when engineering teams shift from writing code to architecting solutions and reviewing agent-generated output. This requires a fundamental shift in how engineers work, and that shift is the programme management challenge, not the technical challenge.

Since 2023, I have encouraged all my technology teams to treat Copilot as a peer programmer, using it by default for code generation, refactoring, and test creation to lift engineering velocity and quality. At a travel logistics software provider operating a 30‑year‑old monolithic platform for airline customers, the engineering leadership initially produced a 36‑month roadmap to refactor the codebase into a containerised microservices architecture to reduce cost to serve and accelerate feature delivery. I incubated a small squad of ML and Java engineers and set up an agentic pattern in which Copilot‑driven agents analysed legacy modules, proposed target service boundaries, generated refactored code stubs, produced unit and integration test suites, and prepared draft CI/CD pipeline configurations for each epic and use case. Engineers then worked as supervisors rather than sole authors: reviewing agent‑generated diffs, tightening security and performance, and curating reusable prompts and patterns so the agents could be reapplied across adjacent domains in the platform. By industrialising this agent‑assisted “modernisation factory” model, we cut the roadmap from 36 months to roughly 12–14 months, while increasing confidence in regression coverage and the reliability of each release.

Project, Programme & Portfolio Management itself: A meta-point worth noting: Programme Managers can deploy agents to do much of the work that consumes their own time. Large programmes generate vast amounts of information: status reports, risk registers, dependency matrices, resource plans. An AI agent can monitor progress across initiatives, flag risks before they escalate, consolidate status reporting, track dependencies, and recommend corrective actions. This creates cognitive space for strategic orchestration and stakeholder alignment — the distinctly human aspects of programme leadership.

Over the past few years, I have started to treat programme management itself as an ideal candidate for agentic augmentation, by progressively “agentifying” the PMO work my team and I were already running, rather than inventing a greenfield system. I layered simple agents on top of the Jira boards, Confluence pages, Google Sheets dashboards, and vendor reports we were already using. These agents scraped sprint boards, RAID logs, and financial trackers, auto‑generated RAG summaries, highlighted emerging risks and dependency clashes, and produced draft weekly and monthly status packs for my delivery managers and me to review rather than compile from scratch. That shift freed a meaningful share of my week from manual reporting and slideware, allowing me to focus on pattern recognition across programmes, re‑prioritising roadmaps, protecting P&L margins, and having the difficult conversations with C‑suite stakeholders when the data showed structural risk to outcomes.

The pattern is consistent: agents deliver maximum value when they are embedded into core processes, where they handle complexity at scale, where human oversight is embedded but not bottlenecked, and where the process itself is redesigned around agent capability rather than simply automating existing procedures.

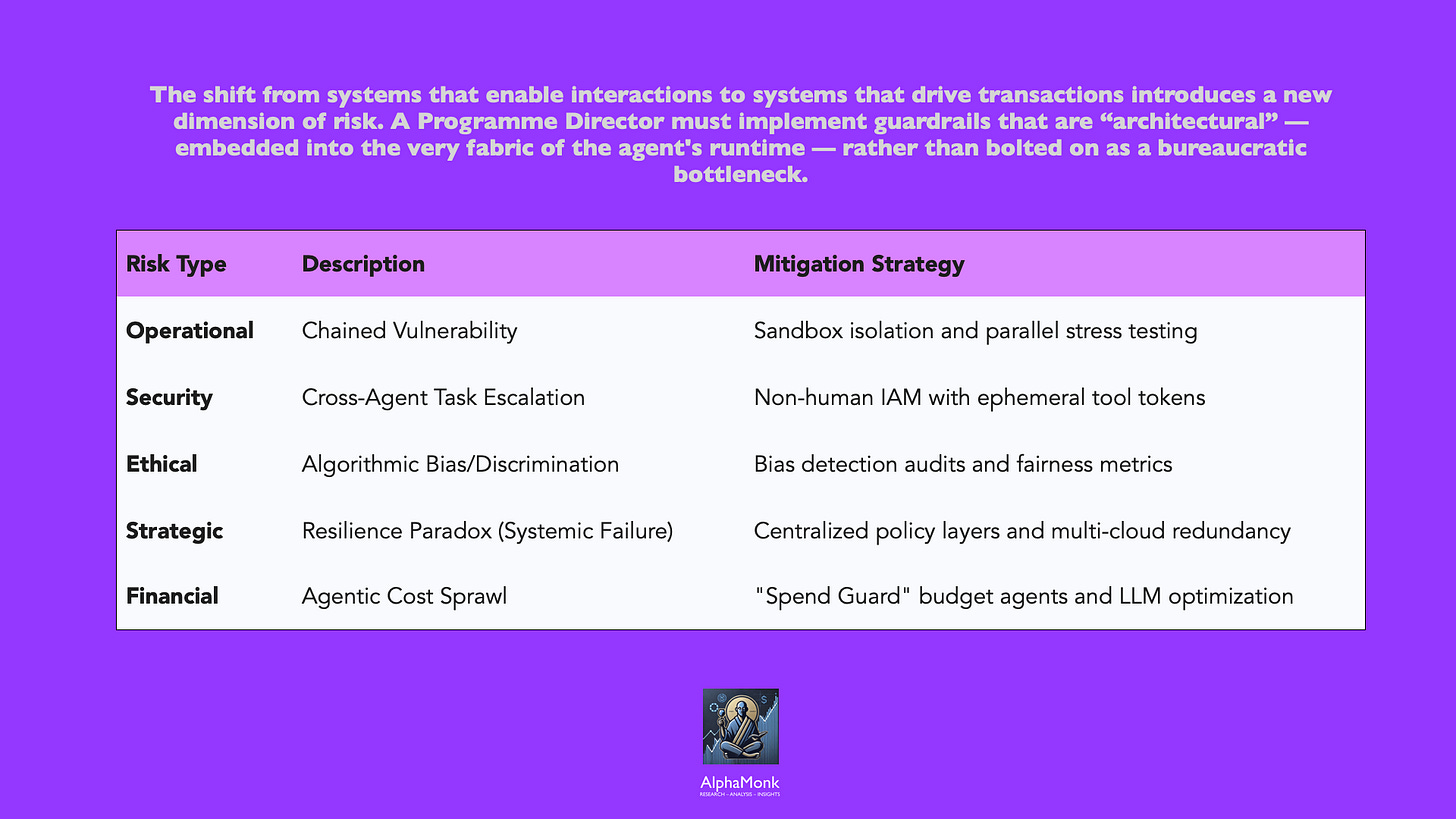

Risk, Control, and Governance in an Agentic World

The temptation when facing new risk is to impose heavy controls and slow deployment. This is the path to pilot purgatory. Agentic AI deployments move at machine speed, and governance cannot lag too far behind, or it becomes a bottleneck that erodes value.

The path forward is not to eliminate risk but to classify it, match it to guardrails, and create safe zones where innovation can move quickly.

Operational risk is perhaps the most familiar. What happens if an agent makes a bad decision at scale? If a procurement agent approves inappropriate suppliers, or a customer service agent refunds unjustifiably, or a loan agent approves overly risky credit, the consequences can be significant. The control is not to prevent agent autonomy, but to define decision boundaries, embed approval gates at critical junctures, and monitor agent behaviour continuously. An agent approving invoices under £5,000 operates within a defined boundary; escalation to humans occurs for higher amounts. This is not a constraint on the agent; it is clarity on its role.
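The invoice example above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Invoice` fields, the `supplier_approved` flag, and the function name are hypothetical, and only the £5,000 limit comes from the text.

```python
from dataclasses import dataclass
from decimal import Decimal

# Limit taken from the invoice example in the text; everything else is assumed.
AUTO_APPROVE_LIMIT_GBP = Decimal("5000")


@dataclass(frozen=True)
class Invoice:
    invoice_id: str
    amount_gbp: Decimal
    supplier_approved: bool  # hypothetical flag from a supplier master list


def route_invoice(invoice: Invoice) -> str:
    """Return 'auto_approve' or 'escalate_to_human' for one invoice."""
    # Unknown suppliers are always outside the agent's boundary.
    if not invoice.supplier_approved:
        return "escalate_to_human"
    # Strictly under the limit: the agent may act on its own.
    if invoice.amount_gbp < AUTO_APPROVE_LIMIT_GBP:
        return "auto_approve"
    # At or above the limit: a person decides.
    return "escalate_to_human"
```

The point of writing the boundary down as code is that it becomes reviewable: risk, finance, and audit can all read the same rule that the agent executes.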

Data and security risk takes on new dimensions in an agentic world. Agents access systems, read files, and exchange data with other agents, often without explicit human instruction. The risk is not speculative; 80 per cent of organisations report encountering risky agent behaviours, including improper data exposure and unauthorised system access. The control is identity and access management at an agent level — ephemeral, just-in-time access for specific tasks, not broad standing permissions. It is also observability: logging agent actions, decisions, and data exchanges so that unusual behaviour is visible. And it is architecture: using an MCP (Model Context Protocol) gateway to mediate agent access to tools and data, applying fine-grained policies at a central point rather than distributing policy enforcement across hundreds of agents.
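To make the idea of ephemeral, task-scoped access plus observability concrete, here is a small Python sketch. It does not use any real identity product or MCP library; the `AccessGrant` type, the function names, and the 15-minute lifetime are all assumptions chosen for illustration.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class AccessGrant:
    """A hypothetical short-lived permission for one action on one resource."""
    agent_id: str
    resource: str
    action: str
    expires_at: datetime


def issue_grant(agent_id: str, resource: str, action: str,
                now: datetime, ttl_minutes: int = 15) -> AccessGrant:
    # The grant expires on its own; there is no standing permission to revoke.
    return AccessGrant(agent_id, resource, action,
                       now + timedelta(minutes=ttl_minutes))


def authorise(grant: AccessGrant, agent_id: str, resource: str, action: str,
              now: datetime, audit_log: list) -> bool:
    """Check one request against a grant and log the decision either way."""
    allowed = (
        grant.agent_id == agent_id
        and grant.resource == resource
        and grant.action == action
        and now < grant.expires_at
    )
    # Every attempt is recorded, including refusals, so odd behaviour is visible.
    audit_log.append(json.dumps({
        "time": now.isoformat(),
        "agent_id": agent_id,
        "resource": resource,
        "action": action,
        "allowed": allowed,
    }))
    return allowed


if __name__ == "__main__":
    start = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
    log: list = []
    grant = issue_grant("agent-7", "payments-db", "read", start)
    print(authorise(grant, "agent-7", "payments-db", "read",
                    start + timedelta(minutes=5), log))
    print(authorise(grant, "agent-7", "payments-db", "write",
                    start + timedelta(minutes=5), log))
```

In a real deployment this check would sit at the central gateway rather than inside each agent, which is what allows policy to be changed in one place.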

Ethical and reputational risk emerges when agents make decisions that are biased, discriminatory, or inconsistent with organisational values. A hiring agent that systematically screens out candidates from certain backgrounds. A lending agent that applies different criteria to different demographic groups. A customer service agent that is rude or threatens customers (both of which have occurred in actual deployments). The control is not to prevent agent decision-making but to measure it, audit it, and correct misalignment before it cascades. This requires establishing clear thresholds for bias, fairness, and brand alignment, and monitoring agent outputs against those thresholds continuously.

Organisational risk manifests when agents proliferate without coordination, duplicating effort, creating conflicting decisions, or accessing data and systems in ways that violate governance. This is the risk of agent sprawl: uncontrolled proliferation of ungoverned, redundant systems. The control is portfolio management—a central inventory of all agents, their capabilities, their access rights, their performance metrics, and their dependencies. It is also architectural reuse: establishing patterns, templates, and pre-built components so that new agents inherit security, observability, and compliance controls rather than building them from scratch.

The governance model that succeeds is one that is built into the platform, not bolted on afterwards. Key governance pillars for safe scaling include:

Agent Classification System: Agents should not all pass through identical approval processes. We must match the level of autonomy to the risk profile, allowing low-risk agents to deploy in sandboxes while high-risk agents face rigorous “human-in-the-loop” decision gates.

The Agentic AI Mesh: A modular, distributed architecture that provides observability and traceability. Every reasoning trace, tool invocation, and decision outcome must be logged to ensure auditability and root cause analysis.

Kill Switches and Fallbacks: Every critical agent must have a termination mechanism. We must engineer “graceful degradation”, ensuring the business continues to function even if an agentic squad is taken offline.

Explainability by Design: In regulated sectors like finance and healthcare, decisions must stand up to audit. We must invest in “system-2 thinking” models that can explain why an agent denied a loan or changed a price.
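Two of these pillars, classification and kill switches, lend themselves to a short sketch. The Python below is illustrative only: the tier names, the `CONTROLS` mapping (including the middle tier, which the text does not mention), and the `GuardedAgent` wrapper are hypothetical.

```python
from enum import Enum
from typing import Callable


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"  # assumed intermediate tier, not named in the text
    HIGH = "high"


# Hypothetical mapping from risk tier to the control applied before an agent acts.
CONTROLS = {
    RiskTier.LOW: "sandbox_autonomous",
    RiskTier.MEDIUM: "autonomous_with_sampled_review",
    RiskTier.HIGH: "human_in_the_loop",
}


class GuardedAgent:
    """Wraps an agent callable with a kill switch and a fallback path."""

    def __init__(self, name: str, tier: RiskTier,
                 agent: Callable[[str], str],
                 fallback: Callable[[str], str]) -> None:
        self.name = name
        self.tier = tier
        self._agent = agent
        self._fallback = fallback
        self.enabled = True

    def kill(self) -> None:
        # Termination mechanism: later tasks go straight to the fallback.
        self.enabled = False

    def handle(self, task: str) -> str:
        if not self.enabled:
            return self._fallback(task)
        try:
            return self._agent(task)
        except Exception:
            # Graceful degradation: an agent failure does not stop the work.
            return self._fallback(task)
```

The fallback would typically be a manual queue or the pre-agent process, which is why that process should be kept alive and rehearsed rather than retired on go-live.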

Governance provides accountability, not fear. By establishing clear "Statement of Work" (SOW) boundaries for agents and aligning with emerging UK regulations like the Cyber Security and Resilience Bill, the programme creates an environment where innovation can scale safely.

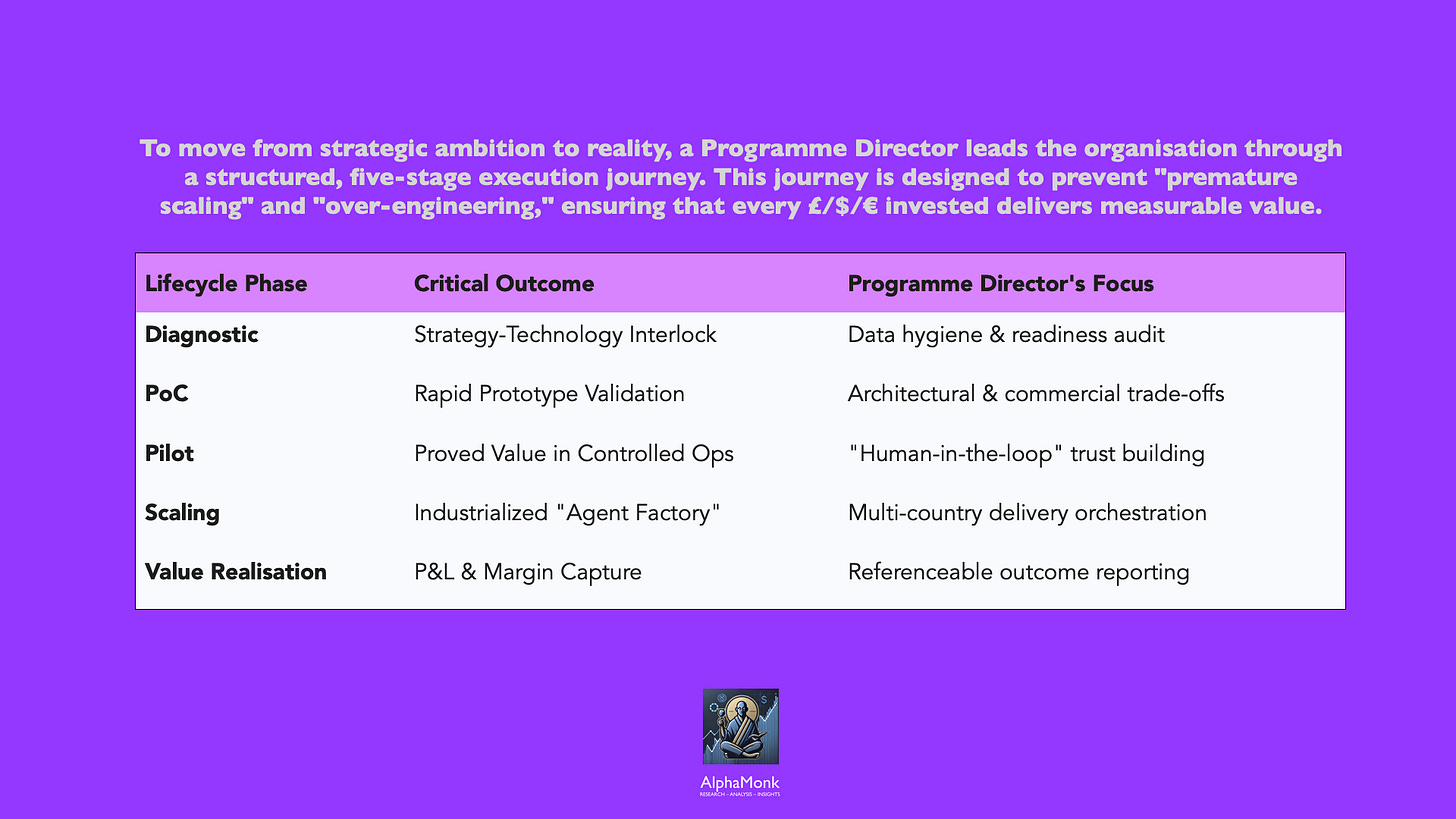

From Ambition to Reality: The Delivery Lifecycle

The journey from strategy to execution follows a clear architecture.

Diagnostic Phase: Understanding readiness is essential. Before a single line of code is written, we must understand our constraints. This phase is about "CxO alignment" and identifying the high-impact "vertical" domains where Agentic AI will define the next decade of competition. It is not a technical assessment alone; it is an assessment of

data maturity (What data is available? How clean is it? How accessible?),

operating model readiness (Are we prepared to redesign processes? Do we have the capability?),

governance readiness (Do we have risk frameworks that account for agentic AI? Can we make decisions quickly?), and

cultural readiness (Will colleagues embrace this, or will we face resistance?).

The diagnostic outputs inform prioritisation, sequencing, and resource allocation. A Programme Director establishes credibility by being honest about constraints rather than optimistic about capability. They then set a baseline for progress and identify the capabilities needed for an ambitious deployment to succeed.

Proof of Concept Phase: The goal is learning, not deployment. We must "learn fast without false confidence." Using an "AI Incubator" or "Growth Lab" approach, we rapidly prototype solutions in weeks. A PoC is thus deliberately narrow in scope — a single process, a limited set of users, a controlled environment. The aim is to answer specific questions:

Does agent autonomy work in this context? What decision boundaries are required? What quality gates matter? Where does the agent fail? How do users respond?

The objective is to validate architectural trade-offs and test basic agent reasoning before committing to large-scale engineering. The temptation is to expand scope quickly (“If it works for HR, let’s do payroll too”); the discipline is to resist expansion until learning is complete. A PoC typically runs 8–12 weeks. It is not a pilot; it should not live in production, and results should not be extrapolated without validation.

Pilot Phase: Pilots differ from proofs of concept in a critical way: they operate in live business environments with real users, real data, and real consequences. The objective is to prove value at small scale before committing to enterprise-wide rollout.

A customer service pilot might target a single geography or customer segment. A procurement pilot might start with a single category of spend.

The pilot operates under close supervision: user feedback is gathered in real time, agent behaviour is monitored daily, and issues are resolved rapidly. A pilot typically runs 12–16 weeks. The exit criteria are clear: percentage of transactions handled autonomously, quality metrics (error rate, satisfaction, compliance), operational metrics (cycle time, cost), and user adoption. If a pilot does not meet exit criteria, the decision is explicit: iterate and re-pilot, or retire the use case.
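Exit criteria are easier to hold people to when they are written down before the pilot starts. The sketch below shows one way to express them in Python; the `Criterion` type and the function are hypothetical, and any metric names or thresholds passed in would have to be agreed by the programme, since the text does not specify them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    metric: str
    threshold: float
    higher_is_better: bool

    def passed(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


def evaluate_pilot(criteria: list[Criterion],
                   observed: dict[str, float]) -> tuple[str, list[str]]:
    """Return the decision and the list of metrics that failed.

    A metric that was never measured counts as a failure.
    """
    failed = [
        c.metric for c in criteria
        if c.metric not in observed or not c.passed(observed[c.metric])
    ]
    decision = "proceed_to_scale" if not failed else "iterate_or_retire"
    return decision, failed
```

For example, with criteria of at least 0.60 for an autonomous handling rate and at most 0.02 for an error rate, observed values of 0.72 and 0.05 would return `iterate_or_retire` with `error_rate` listed as the failing metric.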

Scaling Phase: Scaling is industrialisation, and it is where most AI programmes falter. This is where process is standardised, training is built at scale, technology infrastructure is hardened, governance is operationalised, and change management is executed across the full footprint. What worked for 50 users in one location often breaks when extended to thousands of users across multiple geographies, operating models, and regulatory contexts.

Scaling is not rollout; rollout assumes the system is ready, and scaling makes it ready.

A scaling programme typically runs 4–6 months for a significant function, and includes technical work (environment hardening, load testing, failover procedures), operational work (training, documentation, support structures), change work (stakeholder communication, user readiness), and governance work (compliance sign-offs, risk acceptance, escalation procedures). The discipline here is to not skip steps because they seem tedious. The organisations that fail at scale are those that skip change management, assume teams will figure out how to work differently, or treat governance as a checkbox.

Value Realisation Phase: This is perhaps the most neglected phase. Technology deployment is not the end; it is the beginning of value realisation.

A programme should define expected benefits upfront (cost reduction, cycle time reduction, quality improvement, revenue growth) and establish mechanisms to track realisation. This requires baseline measurement (What was the state before? How long did processes take? How much did they cost?), post-deployment measurement (What is the state after?), and attribution (How much of the improvement is due to the agent, versus other changes happening in parallel?).
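Attribution is the hardest of the three measurements, so a worked example may help. One possible approach, which the article does not prescribe, is a difference-in-differences comparison: measure the same metric for a comparable group that did not receive the agent, and subtract that group's change from the deployed group's change. The sketch below assumes such a comparison group exists and that the metric is one where lower is better (cycle time in hours); the function name and all figures are invented for illustration.

```python
def attributed_improvement(deployed_before, deployed_after,
                           control_before, control_after):
    """Difference-in-differences estimate for a lower-is-better metric.

    Returns the part of the deployed group's improvement that is not
    explained by the change seen in the comparison (control) group.
    """
    deployed_change = deployed_before - deployed_after
    control_change = control_before - control_after
    return deployed_change - control_change


# Illustrative figures only: average cycle time in hours.
baseline_deployed, post_deployed = 40.0, 28.0   # teams using the agent
baseline_control, post_control = 40.0, 37.0     # comparable teams without it

total_gain = baseline_deployed - post_deployed
agent_gain = attributed_improvement(
    baseline_deployed, post_deployed, baseline_control, post_control
)
share_from_agent = agent_gain / total_gain

print(f"Total improvement: {total_gain} hours")
print(f"Attributed to agent: {agent_gain} hours ({share_from_agent:.0%})")
```

With these figures, the deployed teams improved by 12 hours, but the comparison teams improved by 3 hours without the agent, so only 9 hours (75 per cent of the gain) would be attributed to the agent. The estimate is only as good as the comparability of the two groups, which is itself a judgement the programme should document.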

Value realisation tracking typically continues for 6–12 months post-deployment. It is here that the programme either demonstrates success and secures funding for expansion, or reveals shortfalls and informs course correction.

Throughout this lifecycle, the Programme Director prevents common failure modes: pilot purgatory (endless pilots without progression to production), scope creep (expanding a pilot beyond its original intent), over-engineering (building capability far beyond current need), premature scaling (rolling out before readiness is established), and value atrophy (realising benefits early but losing them over time as the organisation regresses to old ways).

The Role of the Programme Director in the Age of Agentic AI

The Programme Director’s role is amplified and redefined.

Traditionally, a Programme Director orchestrates resources, manages timelines, and mitigates risks. These capabilities remain essential.

In an agentic AI world, the focus shifts from control to “strategic orchestration”. We are no longer just managing tasks; we are managing “digital identities” and “goal alignment”. Leadership credibility matters more than technical depth. As someone who has spent years as the “primary point of escalation” for CTOs and Product Heads, I know that success depends on the ability to lead with confidence in unfamiliar environments.

Orchestration involves managing the “distributed delivery” of multi-country engineering squads, mediating cross-timezone friction, and ensuring that the “agentic engine” is pursuing the right goals. We must be “appropriately disruptive,” challenging business unit leaders to look beyond today’s operating model and reimagine entire value chains.

The Programme Director acts as the “advisory bridge” between high-level strategy and depot-level operations. We shape and define Statements of Work that specify agent autonomy levels, and ensure that every initiative is “reference-able, predictable, and profitable”. We are uniquely positioned to make AI adoption successful because we understand the “entire picture”, from the data pipelines in India to the regulatory constraints in London and the union concerns at the regional depots.

Our role is to turn everyone into an “agent leader”. We must role-model the change, demonstrating how we use AI to handle our own “manual toil” in PMO reporting and Gantt management, thereby freeing ourselves for strategic advisory work.

Ultimately, we are the custodians of the transformation’s “North Star,” ensuring that the organisation skates to where the puck is going to be.

Leading with Intent, Not Technology

Agentic AI success is not a technology problem. The technology works. Models are improving rapidly, tools are maturing, and capability is accelerating. The problem is organisational.

It is the problem of translating ambition into execution at the right pace, with the right governance, in ways that people embrace rather than resist. It is the problem of balancing innovation with control, autonomy with oversight, speed with rigour. It is the problem of redesigning processes and operating models, not just deploying software.

This is precisely the domain in which Programme Management discipline delivers disproportionate value. The organisations that will succeed are those where Programme Directors sit at the strategic table, where governance is built in, where process redesign is taken seriously, where colleagues are co-creators rather than subjects of change, and where value realisation is measured and leaders are held accountable for it.

Agentic AI will reshape how organisations compete, how work is structured, and how value is created. That reshaping will not happen through technology alone. It will happen through disciplined, strategic, human-led programme management that translates strategic intent into operational reality.